#gpu-acceleration

46 episodes

#2622: How Transformers Actually Work: Attention, Tokens, and Context

How one architectural change unlocked chatbots, image generation, and protein folding — explained without the jargon.

#2517: How Unsloth Makes LLM Fine-Tuning 2x Faster

Unsloth cuts memory usage by 50-70% and speeds up training 2.2x for models like Llama 3 and Mistral.

#2495: How to Bake Personality Into an LLM in 15 Minutes

Fine-tune a model's personality with ~300 examples and a consumer GPU. SFT + DPO explained.

#2464: Batch APIs: The 50% Discount You're Probably Misusing

Batch inference APIs offer 50% off — but only for the right workloads. Here's when they actually make sense.

#2456: Choosing Between AI Cloud Providers

A practical guide to choosing between Modal, RunPod, Nebius, and Baseten for AI workloads.

#2432: From RTL to GDSII: How Custom Silicon Is Designed

The economics and engineering of ASICs vs. CPUs and GPUs, from transistor placement to hyperscaler strategy.

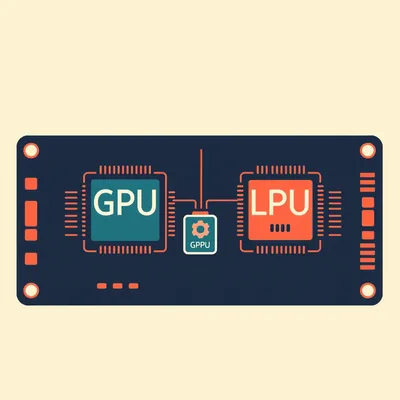

#2431: The 3 Markets in an AI Trench Coat

GPUs, LPUs, and ASICs: why the best hardware for AI depends entirely on what you're trying to do.

#2376: Iran’s Crypto Sanctions Workaround

How Iran turns cheap electricity into cryptocurrency to bypass sanctions—and the tradeoffs of this digital alchemy.

#2177: Skip Fine-Tuning: Shape LLMs With Alignment Alone

Can you build a personalized LLM by skipping traditional fine-tuning and using only post-training alignment methods like DPO and GRPO? We break dow...

#2115: Why AI Answers Differ Even When You Ask Twice

You ask an AI the same question twice and get two different answers. It’s not a bug—it’s physics.

#2065: Why Run One AI When You Can Run Two?

Speculative decoding makes LLMs 2-3x faster with zero quality loss by using a small draft model to guess tokens that a large model verifies in para...

#2063: That $500M Chatbot Is Just a Base Model

That polite chatbot? It started as a raw, chaotic autocomplete engine costing half a billion dollars to build.

#2017: That Q4_K_M Is Not a Cat Sneeze

Those cryptic letters on Hugging Face actually map how much brain power you trade for speed.

#1992: Israel's 4,000-GPU National Supercomputer

Israel is building a sovereign AI supercomputer with 4,000 Nvidia B200 GPUs to keep startups local.

#1940: Why Google's 31B Model Fits in Your GPU

Google just dropped Gemma 4, and its 31-billion-parameter size is a masterclass in hardware-aware AI design.

#1820: Renting vs. Owning GPUs: The Break-Even Math

Is it cheaper to rent serverless GPUs or buy your own hardware? We break down the math on utilization, depreciation, and hidden costs.

#1809: The TTS Developer's Dilemma: Size vs. Speed

Stop guessing. We break down the critical trade-offs between model size, latency, and sample rate for production-ready voice apps.

#1807: Why GPU Containers Force You to Build

Docker promised "run anywhere," but GPU images make you compile for hours. Here’s why the abstraction breaks down.

#1806: Why Mac Minis Are Eating AI's Hardware Race

Apple Silicon's unified memory is crushing traditional GPUs for local LLMs. Here's why the M4 Mac Mini is the new king of affordable AI hardware.

#1752: Whisper Small Beats Whisper Large in Speed & Accuracy

A 4-GPU benchmark on Ubuntu shows the 1.5B-parameter Whisper Large is slower and less accurate than the far smaller Whisper Small.

#1534: The Rise of the Agentic Terminal: Beyond the Command Line

Stop drowning in terminal tabs. Discover how tools like Zellij and Ghostty are transforming the command line into an Agentic Development Environment.

#1224: Cracking the CUDA Code: NVIDIA’s Software Dominance

Discover why NVIDIA’s CUDA is the oxygen of the AI industry and how tools like OpenAI’s Triton are finally challenging its 20-year software moat.

#1109: The T-FLOP Trap: Measuring the Power of Modern AI

Are teraflops the "horsepower" of AI, or just a marketing gimmick? Explore why raw compute speed isn't the whole story in the race for AI power.

#1102: Beyond the Boost: Mastering Modern GPU and RAM Tuning

Is manual hardware tuning still worth it? Discover why undervolting and curve optimization are the new secrets to peak PC performance.

#1081: The K-V Cache: Solving AI’s Invisible Memory Tax

Why does your AI get slower as you chat? Discover the K-V cache, the invisible bottleneck of generative AI, and how we're fixing it in 2026.

#1021: Python: The Accidental King of Artificial Intelligence

Why did a 1980s hobby project become the backbone of AI? Explore the history of Python and the chaos of modern dependency management.

#675: The Intelligence Factory: How AI is Rebuilding the Cloud

From liquid cooling to nuclear power, Herman and Corn explore how AI is transforming data centers into high-density "intelligence factories."

#663: Workstation vs. Consumer: The Real Cost of Power

Is a high-end desktop enough, or do you need a workstation? Herman and Corn break down the "three pillars" of professional hardware.

#633: Memory Wars: The Future of Local Agentic AI

Can your PC handle the next wave of AI agents? Herman and Corn dive into VRAM, quantization, and the future of running LLMs locally.

#484: The Silicon Sharing Economy: Inside Serverless GPUs

How do small teams run massive AI models without $50,000 chips? Corn and Herman dive into the hidden plumbing of serverless GPU providers.

#170: The Heavy Metal of Machine Learning: Inside PyTorch

Discover why PyTorch is the "oxygen" of AI. Herman and Corn explore its history, the magic of Autograd, and the move to the PyTorch Foundation.

#162: Beyond the Desktop: Defining the 2026 Workstation

Is your PC a workstation or just a fast desktop? Herman and Corn break down the hardware that defines professional computing in 2026.

#110: Building the Ultimate Local AI Inference Server

Learn how to build a high-performance local AI server for agentic coding, from dual-GPU PC builds to the power of Mac's unified memory.

#84: The Silicon Arms Race: Why GPUs are the New Oil

Are high-end microchips the new enriched uranium? Herman and Corn dive into the high-stakes world of GPU export bans and global AI supremacy.

#82: Why GPUs Are the Kings of the AI Revolution

From video game dragons to digital brains: Herman and Corn explain why your graphics card is the secret engine behind the AI boom.

#56: Building an AI Model from Scratch: The Hidden Costs

Building an AI model from scratch? It's a brutal reality of trillions of tokens and millions in GPUs. Discover the hidden costs of modern AI.

#55: Running Video AI at Home: The Real Technical Challenge

Video AI: Hype vs. Reality. Can your GPU handle it? We dive into the technical challenges of running video AI at home.

#34: Red Team vs. Green: Local AI Hardware Wars

NVIDIA's CUDA rules AI, leaving AMD users battling a "green wall." Explore the hardware wars and thorny paths forward.

#31: ComfyUI: Power, Polish, & The AI Creator's Frontier

ComfyUI: Unlocking AI's true power, but is your rig ready? Dive into the future of digital artistry.

#27: AMD AI: Taming Environments with Conda & Docker

Tired of AI environment headaches on AMD? We demystify Conda, Docker, and host environments to unlock your GPU's full potential.

#25: GPU Brains: CUDA, ROCm, & The AI Software Stack

Unraveling how GPUs power AI. We dive into CUDA, ROCm, and the software stack that makes it all think.

#18: Beyond the GPU: Unpacking AI's Chip Revolution

Beyond the GPU: we're unpacking AI's chip revolution. Discover the crucial, often overlooked world of AI's fundamental building blocks.

#17: Cloud Render Superpowers: Local Edit, Remote Muscle

Unleash cloud superpowers! Edit locally, render remotely with AI-accelerated GPUs like NVIDIA A100s.

#12: The AI Breakthrough: Transformers & The Perfect Storm

AI's everywhere. How did chatbots, art, and video all emerge so suddenly? The secret lies in Transformers and a perfect storm.

#6: How To Fine Tune Whisper

Build your own AI transcription tool! We'll walk you through fine-tuning Whisper, from data to notebook.

#2: Local STT For AMD GPU Owners

AMD GPU? No problem! Dive into local AI adventures like on-device speech to text.