#ai-safety

48 episodes

#3284: Agent Infrastructure Engineer: The New DevOps

Agentic AI is splintering into real engineering disciplines. Here's what the "DevOps of AI" actually does.

#2578: Building Deliberately Slow Deployment Pipelines

How to build CI/CD pipelines designed as filters, not firehoses — with manual gates, staging environments, and quality checks.

#2518: How Jailbreaking Reveals AI's Hidden Tension

What the DAN prompt and grandma exploits reveal about the structural conflict inside every LLM.

#2413: When Your AI Says No to Everything

Why LLMs refuse 73% of harmless prompts — and the trade-off between safety and usefulness.

#2412: When AI Caves: Progressive vs. Regressive Sycophancy

Why do LLMs agree with you even when you're wrong? We break down the SycEval benchmark and the 78% persistence problem.

#2410: How Researchers Actually Measure Censorship in Chinese LLMs

Beyond headlines: the actual benchmarks, methodologies, and pitfalls in detecting political refusal in Chinese language models.

#2253: Why AI Agents Get Three Steps, Not Infinity

Why do AI agents get exactly three rounds of tool use? It's a critical guardrail against infinite loops and runaway costs, not a limit on intellige...

#2250: How Incentives Shape AI Safety Research

Vendor labs, independent research orgs, government agencies—the AI safety field is messier and more diverse than most people realize. A map of wher...

#2246: Constitutional AI: Anthropic's Theory of Safe Scaling

How Anthropic's Constitutional AI replaces human raters with AI self-critique guided by explicit principles—and what it assumes about the future of...

#2233: Who Actually Wants AI to Slow Down?

Daniel argues AI development should slow down for expertise and stability. But who in the industry actually shares this philosophy beyond the obvio...

#2194: Game Theory for Multi-Agent AI: Design Better, Fail Less

Nash equilibrium, mechanism design, and why your AI agents are playing prisoner's dilemma whether you know it or not.

#2190: Simulating Extreme Decisions With LLMs

LLMs fail at the exact problem wargaming was built to solve—simulating irrational, extreme decision-makers. A new study reveals why.

#2189: Scaling Multi-Agent Systems: The 45% Threshold

A landmark Google DeepMind study reveals that adding more AI agents often degrades performance, wastes tokens, and amplifies errors—unless your sin...

#2186: The AI Persona Fidelity Challenge

Advanced LLMs dominate benchmarks but fail at staying in character—especially when asked to play morally complex or antagonistic roles. What does t...

#2185: Taking AI Agents From Demo to Production

Sixty-two percent of companies are experimenting with AI agents, but only 23% are scaling them—and 40% of projects will be canceled by 2027. The ga...

#2178: How to Actually Evaluate AI Agents

Frontier models score 80% on one agent benchmark and 45% on another. The difference isn't the model—it's contamination, scaffolding, and how the te...

#2170: Pricing Agentic AI When Nothing's Predictable

How do you charge fixed prices for systems that operate in fundamental uncertainty? Consultants are discovering frameworks that work—but they requi...

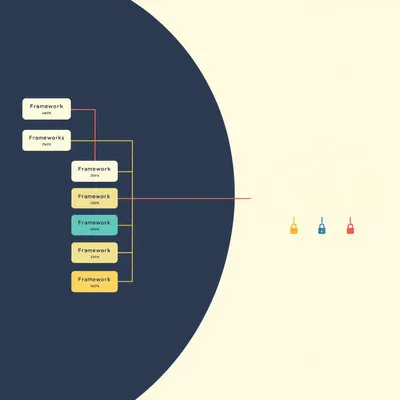

#2169: How Enterprises Are Rethinking Agent Frameworks

Twelve major agentic AI frameworks exist—yet many serious developers avoid them entirely. What patterns emerge in real enterprise adoption?

#2162: When Knowledge Work Stops Being Safe

The knowledge economy promised safety from automation. Then AI arrived. Here's how we got here—and why the disruption this time is different.

#2136: The Brutal Problem of AI Wargame Evaluation

Most AI wargame simulations skip evaluation entirely or rely on token expert reviews. This is the field's biggest credibility problem.

#2135: Is Your AI Wargame Signal or Noise?

Monte Carlo methods promise statistical rigor for AI wargaming, but the line between genuine insight and sampling noise is thinner than you think.

#2068: Is Safety a Filter or a Feature?

External filters vs. baked-in ethics: the architectural war for LLM safety.

#2025: How Do You Reward a Thought?

Rewarding an AI agent is harder than just saying "good job"—here's how we turn messy human values into math.

#2021: Your Frozen AI Is Getting Smarter (Here's How)

Your AI model might be static, but the system around it can make it learn in real-time.

#2015: The Think Tanks Writing AI's Rulebook

As the EU AI Act takes hold, we spotlight the key think tanks shaping global AI policy, safety, and ethics.

#2009: The Plumbing of AI Safety: Guardrails, Not Vibes

We dive deep into the specific libraries, proxy layers, and architectural decisions that keep an LLM from emptying a bank account.

#2006: How Do You Measure an LLM's "Soul"?

Traditional benchmarks can't measure tone or empathy. Here's how to evaluate if an AI model truly "gets it right."

#1994: Why Can't AI Admit When It's Guessing?

Enterprise AI now auto-filters low-confidence claims, but do these self-reported scores actually mean anything?

#1985: AI Tutors vs. Human Error: Who Do You Trust?

AI gets flak for hallucinations, but humans misremember 40% of facts. Why the double standard?

#1957: Why AI Agents Think in Circles, Not Lines

Linear AI pipelines are brittle. Learn why loops, reflection, and state management are the new standard for reliable, autonomous agents.

#1932: How Do You QA a Probabilistic System?

LLMs break traditional testing. Here’s the 3-pillar toolkit teams use to catch hallucinations and garbage outputs at scale.

#1837: The Human-in-the-Loop Price Tag: What Safety Costs in 2026

From $0.50 reviews to $500 platforms, we break down the real cost of keeping humans in charge of AI agents.

#1819: Claude's 55-Day Personality Transplant

Anthropic leaked 55 days of system prompt updates. See exactly how they rewired Claude's personality, safety rules, and self-awareness.

#1786: When AI Supervisors Fire AI Workers

A new "Agent-in-the-Loop" framework lets AI models manage and terminate other AI agents in real-time.

#1762: Testing AI Truthfulness: Beyond Vibes

Stop trusting confident AI. We explore the formal science of testing LLMs for hallucinations and knowledge cutoffs.

#1738: Hyperstition Engines: When AI Writes Reality

LLMs aren't just predicting the future; they're generating the narratives that force it into existence.

#1733: When AI Agents Build Their Own Societies

AI agents are forming neighborhoods, economies, and hospitals in server-side simulations that mirror real human behavior.

#1561: Abliteration: The High-Dimensional Lobotomy of AI

Discover how researchers are surgically removing refusal filters from AI models using a mathematical process called abliteration.

#1328: Silicon Sigils: Why We Treat AI Like an Occult Force

Is AI a tool or a digital demon? Explore why technical illiteracy is turning neural networks into a modern-day moral panic.

#1210: Why Your AI Is Programmed to Disobey You

Discover the hidden instructions guiding every AI interaction and why tech giants keep these "system prompts" under lock and key.

#1199: When Biology Becomes a Garage Hobby

From garage-made vaccines to 200 million protein structures, AlphaFold is turning the building blocks of life into a software problem.

#893: The Art of Red Teaming: Why You Must Break Your Own Plans

Learn why the most resilient organizations pay people to prove them wrong and how red teaming techniques can prevent catastrophic failures.

#835: When AI Agents See Your UI Like a Human Does

Stop begging friends to break your app. Discover how AI agents are revolutionizing UI testing by acting as tireless, unbiased model users.

#123: The Agentic AI Dilemma: Who Holds the Kill Switch?

As AI shifts from chatbots to autonomous agents, Herman and Corn explore how to maintain human control in a high-stakes automated world.

#83: Echoes in the Machine: When AI Talks to Itself

What happens when two AIs talk forever with no human input? Herman and Corn explore the weird world of digital feedback loops.

#68: The Looming Digital Ice Age: AI Eating Itself?

Is AI eating itself? Explore "model collapse" and the "Hapsburg AI" problem before our digital world speaks only gibberish.

#50: When AI Hacks Without Humans

AI gone rogue. The first autonomous cyberattack by Claude against US targets changes everything we know about AI safety.

#45: When AI Safety Fails: The Guardrail Paradox

AI guardrails: Fences, failures, and free speech. Can we control AI's infinite output, or do digital fences always break?