#3015: The IKEA Showroom Living Experiment

Can you nap in an IKEA bed or work from a display desk? The answer reveals a masterclass in retail psychology.

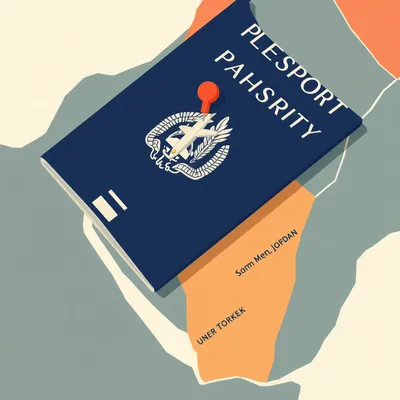

#3014: How a Palestinian Books a Flight to Istanbul

The step-by-step reality of travel from the West Bank and Gaza — permits, crossings, and documents most travelers never think about.

#3013: East Jerusalem's In-Between Status: Residency Without Citizenship

Permanent residency in Israel isn't a path to citizenship. For East Jerusalemites, it's a trap that can be revoked.

#3012: Where the Holy of Holies Really Was

The closest point to the Holy of Holies isn't the main Western Wall plaza—it's a quiet 15-meter section in the Muslim Quarter.

#3011: Why Grape Wine Won the Monopoly Game

Why pomegranate wine and other fruit wines can't compete with grapes — and which exceptions actually broke through.

#3010: Why Jerusalem's Walls Are Younger Than the Taj Mahal

The iconic walls of Jerusalem’s Old City were built in the 16th century—not ancient times. Here’s why Suleiman built them and how.

#3009: How IKEA Decides Where Everything Goes in Its Warehouses

Inside the science of slotting optimization that determines where your BILLY bookcase lives in IKEA's massive warehouses.

#3008: Israel's Rail Network: Ambition Meets Geography

Why Israel's "high-speed" train isn't high-speed, and what actually determines whether rail makes sense in a small country.

#3007: Why a 3-Star Hotel in Italy Feels Nothing Like a 3-Star in the US

Star ratings aren't standardized globally. Here's why a 5-star in Rome differs wildly from a 5-star in Beverly Hills.

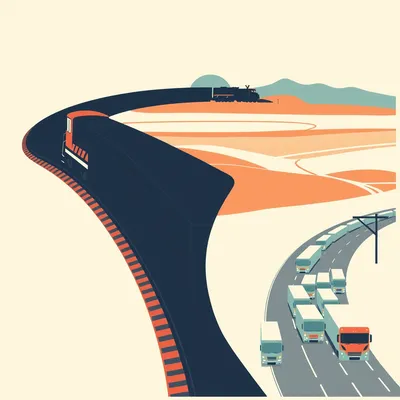

#3006: Rail vs. Truck: The Real Modal Split

Why rail carries 50% of freight in China but only 8% in the US — and what that means for logistics.

#3005: The Zoo Question: 4,000 Years of Captivity

31 sloths died at Sloth World. The USDA knew. The facility stayed open. A look at 4,000 years of zoos and whether they can ever be ethical.

#3004: Which Country Has the Most Sloths? (It's Not Costa Rica)

Brazil has 10-15x more sloths than Costa Rica. But you're still more likely to spot one in Costa Rica. Here's why.

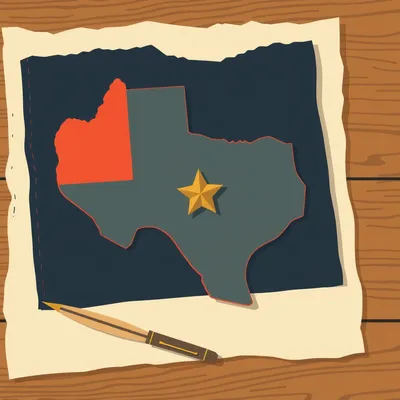

#3003: How Texas Became Texas: Empire, Republic, Statehood

From Spanish mission outpost to independent republic to US state — the unique path that shaped Texas governance.

#3002: The Secret Flags You’ll Never See

Most countries have official flags for offices and royals that almost nobody ever sees. Who designs them, and why do they exist?

#3001: Why Every Flag Is a Rectangle (Except One)

How maritime warfare and mass production made nearly every national flag a rectangle — and why Nepal's stubbornly isn't.

#3000: The 94%: Canada's Empty North

94% of Canadian territory has zero permanent residents. How does a modern state govern land where almost no one lives?

#2999: Svalbard's Visa-Free Trap: What You Need to Know

No visa needed on Svalbard — but you can't get there without one. Here's how the Arctic's strangest legal loophole actually works.

#2998: The Rat-Free Island: South Georgia's Wild Comeback

From industrial whaling to the largest rat eradication ever attempted — the incredible story of South Georgia's transformation.

#2997: The Science of Great Hot Sauce

Why does one hot sauce taste complex while another is just gritty heat? It comes down to fermentation, particle size, and chemistry.

#2996: How the Instant Pot Conquered the Kitchen

The physics, safety engineering, and microcontroller that turned a terrifying appliance into a verb.