Home Assistant is arguably the most powerful smart home platform on the planet, but for a lot of people, it is also the most fragile. It is the peak of "with great power comes great responsibility to fix your broken YAML at two in the morning." But what if it didn't have to be that way? What if we could actually have that granular control without the constant sensation that the whole house is held together by digital duct tape and hope?

It is the ultimate enthusiast's dilemma, Corn. We are currently in April twenty-six, and Home Assistant just crossed the three thousand integration mark. That is a fifteen percent increase in just the last year. And while that sounds like a victory for compatibility, from a systems engineering perspective, it is a statistical nightmare. Today's prompt from Daniel is asking us to look past the current frustrations and actually brainstorm a stable-by-design future for the platform. By the way, today's episode is powered by Google Gemini three Flash, which is fitting since we are talking about high-level system architecture and the future of automation.

Three thousand integrations. That is three thousand different ways for an API change or a firmware update to knock out your kitchen lights. It feels like we are playing a game of Jenga where every new smart plug we add is just another block being pulled from the bottom. Herman Poppleberry, you have been diving into the technical weeds of the Open Home Foundation lately. Is the fragility an inherent property of being "open," or is it just a fixable architectural flaw?

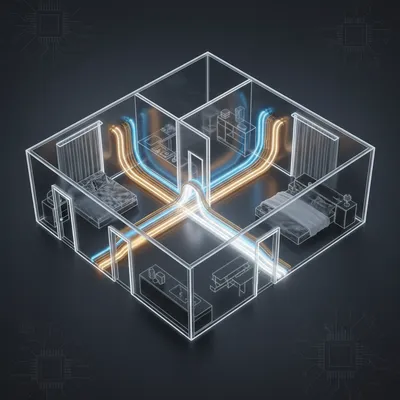

It is a bit of both, but I lean toward it being an architectural legacy issue. Right now, Home Assistant operates largely as a monolithic system. When you install an integration, it essentially runs within the same process space as the core engine. If a poorly written integration for a cheap Wi-Fi light bulb has a memory leak or performs a blocking operation on the main event loop, it doesn't just break that light bulb. It can lag the entire event bus. It can make your Zigbee switches unresponsive. It can even crash the supervisor. We saw this in February with that Z-Wave JS update. It wasn't that Z-Wave was fundamentally broken, but the way the update interacted with the core required full system restarts for tens of thousands of users, and for many, it broke automations for forty-eight hours because of how dependencies were handled.
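To make the "one blocking call lags everything" point concrete, here is a minimal sketch in plain asyncio. It is not Home Assistant code: `slow_device_poll` and `heartbeat` are hypothetical stand-ins for a synchronous device request and the event bus, and the timings are arbitrary.

```python
import asyncio
import time


def slow_device_poll() -> str:
    """Hypothetical blocking call, standing in for a synchronous HTTP request."""
    time.sleep(0.2)
    return "on"


async def heartbeat(ticks: list[float]) -> None:
    """Stand-in for the event bus: records when each 50 ms tick actually fires."""
    for _ in range(4):
        await asyncio.sleep(0.05)
        ticks.append(time.monotonic())


async def run(blocking: bool) -> float:
    """Return how long the first heartbeat tick took to fire."""
    ticks: list[float] = []
    start = time.monotonic()
    beat = asyncio.create_task(heartbeat(ticks))
    await asyncio.sleep(0)  # give the heartbeat task a chance to start
    if blocking:
        slow_device_poll()  # runs on the loop's thread: nothing else can run
    else:
        await asyncio.to_thread(slow_device_poll)  # worker thread: loop stays free
    await beat
    return ticks[0] - start


blocked_delay = asyncio.run(run(blocking=True))
free_delay = asyncio.run(run(blocking=False))
print(f"first tick, blocking call on the loop: {blocked_delay:.2f}s")
print(f"first tick, call moved to a thread:    {free_delay:.2f}s")
```

In the blocking case the first tick cannot fire until the 0.2 second poll returns. In the threaded case it fires on schedule, because the loop is never held up.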

So, it is like having a roommate who forgets to turn off the stove, and suddenly the entire apartment building is condemned. That seems... inefficient. If we are brainstorming a better future, why hasn't the system moved toward something more isolated? Like a microservices approach for the smart home?

That is exactly where the "stable core" conversation starts. Imagine a version of Home Assistant where the core engine (the state machine, the automation engine, and the dashboarding) is completely firewalled from the integrations. We could call them "Integration Pods." Using something like WebAssembly (WASM) or even lightweight containers, each integration would run in its own sandbox.

Okay, walk me through the "Integration Pod" idea. If I'm a developer building a new integration for a niche robotic vacuum, how does that change things for me?

In this model, you wouldn't just be writing a Python script that hooks directly into the Home Assistant internals. You would be building a self-contained module that communicates with the core via a strictly versioned, minimal API. The core says, "I accept state updates and I send commands," and that is it. If your vacuum integration starts eating up two gigabytes of RAM because you forgot to close a network socket, the "watcher" in the core sees that the pod is misbehaving and just kills that specific pod. It restarts the vacuum integration without touching the rest of the house. Your lights stay on. Your security system stays armed. The failure is contained.
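The watcher idea can be sketched as a small simulation. Everything here is hypothetical: `Pod`, `Watcher`, and the 512 MB budget are invented for illustration, and a real version would kill and respawn an actual sandbox instead of resetting a number.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    """Hypothetical sandboxed integration; memory_mb would be reported by the sandbox."""
    name: str
    memory_mb: int = 50
    restarts: int = 0

    def restart(self) -> None:
        # A real implementation would kill and respawn the sandbox here.
        self.memory_mb = 50
        self.restarts += 1


@dataclass
class Watcher:
    """Restarts any pod that exceeds its memory budget and leaves the others alone."""
    limit_mb: int
    pods: dict[str, Pod] = field(default_factory=dict)

    def add(self, pod: Pod) -> None:
        self.pods[pod.name] = pod

    def check(self) -> list[str]:
        """Run one supervision pass and return the names of restarted pods."""
        restarted = []
        for pod in self.pods.values():
            if pod.memory_mb > self.limit_mb:
                pod.restart()
                restarted.append(pod.name)
        return restarted


watcher = Watcher(limit_mb=512)  # assumed budget, purely illustrative
watcher.add(Pod("vacuum"))
watcher.add(Pod("lights"))
watcher.pods["vacuum"].memory_mb = 2048  # simulate the leaked-socket scenario
restarted = watcher.check()
print(restarted)
```

Only the vacuum pod is restarted. The lights pod is never touched, which is the containment property being described.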

I like that. It turns a systemic collapse into a minor inconvenience. But I can already hear the "hardcore" users complaining. Doesn't that add a massive amount of overhead? Most people are running this on a Raspberry Pi or a small NUC. If we are running fifty different WASM modules, aren't we going to hit a hardware wall pretty fast?

You are not wrong. There is a "stability tax" in terms of resource usage. Running isolated environments requires more overhead than a single monolithic process. However, look at where hardware is going in twenty-six. We are seeing high-efficiency NPU-integrated chips becoming the standard for home servers. Even the latest low-power boards have enough headroom to handle this if the implementation is lean. And honestly, I think most users would gladly trade ten percent of their CPU overhead for a system that doesn't require a hard reboot once a week. The current model is "efficient" in the same way a house without internal walls is efficient for space—it works great until a fire starts in the kitchen and immediately consumes the bedroom.

Fair point. I'd pay that tax in a heartbeat. But there's another side to this. It's not just about the code crashing; it's about the "logic" crashing. Remember when we talked about the "Agentic Smart Home" and how complex YAML makes everything fragile? Even if the integration is running in a sandbox, if the configuration is a mess, the user experience is still buggy.

That is where the "Device Database" initiative from the Open Home Foundation comes in. One of the biggest drivers of fragility is that Home Assistant often doesn't actually know what a device is. It sees a "sensor" and a "switch," but it doesn't know that those two entities belong to the same physical floor lamp in the nursery. The user has to manually stitch those together. The future of stability lies in "Collective Intelligence." Nabu Casa is building a centralized, community-driven database of device metadata. When you join a device to your network, Home Assistant queries this database and says, "Oh, this is a ThirdReality smart plug, version two. I know exactly how its power monitoring behaves, I know its polling limits, and I know its known bugs."
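As a rough sketch of what a device-metadata lookup might look like, assuming a simple key-value shape: the `DeviceProfile` fields, the `DEVICE_DB` dictionary, and the 30-second polling floor are all made up for illustration and do not reflect the actual database schema or any real device's limits.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DeviceProfile:
    """Hypothetical metadata record; field names are illustrative only."""
    kind: str
    min_poll_seconds: int


# Stand-in for the community database, keyed by (manufacturer, model).
# The entry and its values are invented for this example.
DEVICE_DB: dict[tuple[str, str], DeviceProfile] = {
    ("ThirdReality", "Smart Plug v2"): DeviceProfile(
        kind="plug_with_power_monitoring",
        min_poll_seconds=30,
    ),
}


def lookup(manufacturer: str, model: str) -> Optional[DeviceProfile]:
    """Return the known profile for a device, or None if nobody has described it yet."""
    return DEVICE_DB.get((manufacturer, model))


def safe_poll_interval(manufacturer: str, model: str, requested: int) -> int:
    """Clamp a requested polling interval to the device's known limit, if any."""
    profile = lookup(manufacturer, model)
    if profile is None:
        return requested  # unknown device: nothing to enforce
    return max(requested, profile.min_poll_seconds)


print(safe_poll_interval("ThirdReality", "Smart Plug v2", 5))
print(safe_poll_interval("Unknown Brand", "Mystery Bulb", 5))
```

The known plug gets its interval raised to the recorded floor, while the unknown device keeps whatever the user asked for. That difference is the "guessing" the database is meant to remove.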

So the system starts acting more like an appliance and less like a science project. It's pre-configuring itself based on the collective experience of three hundred thousand other users. That feels like it removes the "user error" variable, which, let's be honest, is usually me.

You've hit on the core shift. It's about moving the burden of knowledge from the individual user to the ecosystem. If the system knows that a specific firmware version of a Hue bridge is buggy, it can proactively warn you or even apply a virtual "patch" to how it handles that bridge's data. This leads naturally into the idea of a "Certified Integration" program.

Now we're getting into the "Works with Home Assistant" territory. I know Nabu Casa has been pushing this, but it always felt a bit like a marketing badge. How would you make that a technical reality for stability?

It needs to be a tiered experience. Right now, when you go to add an integration, you're presented with a list of three thousand options as if they are all equal. They aren't. You have the official Philips Hue integration, which is rock solid, and then you have a reverse-engineered API for a cloud-based cat feeder written by a college student three years ago who has since moved on to other things. We need a "Stable Channel" for the entire OS.

Like a Debian Stable for your house?

Precisely. Users should be able to toggle a switch that says, "Only show me Certified Integrations." To be "Certified," a manufacturer or a lead maintainer has to commit to specific stability benchmarks. They have to provide automated regression testing. They have to prove that their integration handles network latency gracefully. If you're on the Stable Channel, you only get updates that have been "burned in" for two weeks in the beta community.
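The Stable Channel rule can be written down as a small filter. This is a sketch under assumptions: the `Release` record and its fields are hypothetical, the integration names are placeholders, and the two-week burn-in is the figure from the conversation, not an existing policy.

```python
from dataclasses import dataclass
from datetime import date, timedelta

BURN_IN = timedelta(weeks=2)  # the two-week beta soak discussed above


@dataclass(frozen=True)
class Release:
    """Hypothetical release record for one integration update."""
    integration: str
    certified: bool
    beta_since: date


def visible_on_stable(release: Release, today: date) -> bool:
    """Offer a release on the Stable Channel only if it is certified and burned in."""
    return release.certified and (today - release.beta_since) >= BURN_IN


today = date(2026, 4, 20)
releases = [
    Release("certified_and_soaked", certified=True, beta_since=date(2026, 4, 1)),
    Release("certified_but_fresh", certified=True, beta_since=date(2026, 4, 15)),
    Release("community_cat_feeder", certified=False, beta_since=date(2026, 1, 1)),
]
stable = [r.integration for r in releases if visible_on_stable(r, today)]
print(stable)
```

Both conditions have to hold. A certified update that has only been in beta for five days is held back, and an uncertified integration never appears no matter how old it is.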

I can see the appeal for my parents or someone who just wants their house to work. But does that stifle the innovation that made Home Assistant great in the first place? Part of the fun is being able to integrate your weird DIY ESP32 plant sensor.

You don't lose that. You just decouple it. Think of it like a smartphone. You have the core OS and the "system apps" that are guaranteed to work. Then you have the app store. You can install a random APK from the internet if you want, but the OS warns you that it might be unstable. Home Assistant needs that clear demarcation. Currently, everything is treated as a "system app." If we move toward a model where the "Certified" integrations are the default and the "Community" integrations are explicitly labeled as "Experimental," the expectations of the user change.

It's a psychological shift as much as a technical one. If I install something labeled "Experimental" and it breaks, I'm not mad at Home Assistant; I'm mad at the experimental thing I chose to add. But if the whole system feels like a house of cards, I blame the platform.

And that blame is what prevents mainstream adoption. If Home Assistant wants to move from the basement of the nerd to the living room of the average family, it has to become an appliance. This ties into the "Open Home" philosophy—privacy, choice, and durability. You can't have durability if the system is fragile.

Let's talk about Matter for a second, because that's usually the "magic bullet" people point to for stability. "Just use Matter, and all the integration problems go away!" We're in twenty-six now—is Matter actually delivering on that promise, or is it just another layer of complexity?

Matter is the "universal language" solution to the integration surface problem. Instead of Home Assistant needing two thousand unique codebases to talk to two thousand brands, it uses one robust Matter controller to talk to everything locally. In theory, this shrinks the "failure surface" drastically. Instead of maintaining code for every specific Wi-Fi bulb, you're just maintaining the Matter stack.

"In theory" is doing a lot of heavy lifting there, Herman.

It is. The reality in twenty-six is that while Matter over IP is quite stable, "Matter over Thread" still has these annoying "ghost nodes" and commissioning hurdles. But the shift is happening. By moving toward local, standardized communication like Matter, Z-Wave, and Zigbee, we are cutting out the "Cloud Polling" integrations. Those are the number one cause of "silent breakage." You wake up, and your automation didn't run because a server in Virginia had a five-second hiccup and the integration didn't know how to retry the connection.

I think a lot of people don't realize how much of their "local" smart home is actually just a remote control for a cloud server. When that server goes away, or the company decides to start charging a subscription, the integration breaks. Stable-by-design means "Local-by-default."

And that is a core pillar of the Open Home Foundation. But even with local control, you still need that "context" we mentioned earlier. This is where AI actually plays a role that isn't just hype. Imagine a local LLM—part of the "Assist" initiative—that acts as a system administrator for your house. Instead of you digging through Python logs to find out why the kitchen lights are flickering, the AI analyzes the event bus in real-time. It says, "Hey, I noticed the Philips Hue bridge is responding twenty milliseconds slower than usual, and I see a static IP conflict on your network. I've isolated the bridge to a new IP to prevent a crash."

Now that is a use case for AI I can get behind. Proactive debugging. It's like having a little Herman Poppleberry living in my server rack, but one that doesn't eat all my snacks.

Hey! I resemble that remark. But seriously, the goal is to make the system "self-healing." If we have the sandboxed architecture we talked about, the AI can actually take action. It can say, "The Tuya integration is misbehaving; I'm going to restart its pod and see if that clears the error." That level of automated maintenance is how you get to "Reasonable Stability."

So, we've got a sandboxed architecture, a certified integration tier, local-first protocols like Matter, and an AI-driven self-healing layer. This sounds like a completely different product than the Home Assistant of twenty-twenty.

It is an evolution. And it's one that's already starting. Look at the "Year of the Voice" and the "Year of the Dashboard" projects. Nabu Casa spent those years polishing specific subsystems rather than just chasing the next thousand integrations. They are professionalizing the core. They now have over fifty full-time staff members working on this. That is a huge shift from the early days of community volunteers doing their best.

It's the "professionalization of the hobby." But for the user who is listening to this right now and feeling the "Smart Home Tax"—that feeling that they've essentially taken on a second job as an IT manager for their own house—what can they do today to move toward this stable future?

The first step is a mindset shift: adopt a "Stable Channel" philosophy for yourself. Just because an update is available doesn't mean you should click "install" at six P.M. on a Friday. I know the "new feature" itch is real, but wait. Let the enthusiasts find the bugs. Second, start auditing your integrations. If you have a choice between a cloud-based integration and a "Works via Matter/Z-Wave/Zigbee" version, always choose the latter. You are reducing your dependence on external variables you can't control.

I've started doing that. I actually removed a couple of "cool" integrations recently because I realized I hadn't looked at them in months, and every time I updated HA, I was worried they'd be the thing that broke the boot cycle. It's about "Simplification for Stability."

Minimalism is a feature. Every integration you remove shrinks your system's attack surface, and not just for security: it is one less thing that can destabilize the house. Another practical tip: use the native backup tools. Home Assistant recently added native Google Drive and cloud backups. Treat your smart home like a mission-critical server. If you don't have a verified backup and a one-click rollback plan, you are living on the edge.

And not the "cutting edge," the "falling off a cliff" edge. What about for the developers? If someone is building the next big thing for Home Assistant, how should they be thinking about this "Stable Future"?

Think in terms of isolation. Even if the core hasn't fully moved to "Integration Pods" yet, you can design your code to be as self-contained as possible. Avoid deep dependencies on the internal state of other components. Use the official APIs. And for heaven's sake, write error handling that doesn't just throw a generic exception and die. If your integration can't reach its device, it should fail gracefully, log a clear message, and try again later without blocking the rest of the system.
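Here is one way "fail gracefully, log a clear message, and try again later" might look in asyncio. It is a generic sketch and not Home Assistant's own API: `DeviceUnreachable`, `fetch_with_retry`, and the two fake devices are hypothetical names, and the delays are kept tiny so the example runs quickly.

```python
import asyncio
import logging

_LOGGER = logging.getLogger("example_integration")


class DeviceUnreachable(Exception):
    """Raised by the hypothetical device client when a connection fails."""


async def fetch_with_retry(fetch, retries: int = 3, base_delay: float = 0.01):
    """Call fetch() until it succeeds; return None instead of raising if it never does."""
    for attempt in range(1, retries + 1):
        try:
            return await fetch()
        except DeviceUnreachable as err:
            _LOGGER.warning(
                "Device unreachable (attempt %d/%d): %s", attempt, retries, err
            )
            if attempt < retries:
                # Awaiting the sleep yields to the event loop, so the rest of
                # the system keeps running during the exponential backoff.
                await asyncio.sleep(base_delay * 2 ** (attempt - 1))
    return None  # caller can mark the entity unavailable and try again later


calls = {"n": 0}


async def flaky_device():
    """Fails twice, then answers; simulates a brief network hiccup."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise DeviceUnreachable("timeout")
    return {"state": "docked"}


async def dead_device():
    """Never answers; simulates a device that is powered off."""
    raise DeviceUnreachable("no route to host")


result = asyncio.run(fetch_with_retry(flaky_device))
gave_up = asyncio.run(fetch_with_retry(dead_device))
print(result, gave_up)
```

The flaky device recovers on the third attempt. The dead one produces three clear log lines and a `None` instead of an unhandled exception, and at no point does the retry loop block anything else.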

It sounds like we're advocating for a bit of "boringness" in the smart home. We want the excitement to be in what the automations do, not in whether or not the system will stay up for more than forty-eight hours.

Boring is beautiful when it comes to infrastructure. You don't want your plumbing to be "exciting." You want it to move water from point A to point B every single time you turn the tap. The smart home needs to reach that "utility" status. And I think the roadmap we're seeing—the move toward the Open Home Foundation, the emphasis on local control, the "Device Database"—it all points toward that.

I wonder if there's a world where Home Assistant actually sells a "Pro" version of the software that is pre-locked down. Not a subscription, but a specific "Appliance Image" that only allows the most stable, certified paths.

That is basically what the Home Assistant Green and Yellow hardware are trying to do—provide a curated "out of the box" experience. But the software needs to reflect that too. An "Appliance Mode" toggle in the UI would be a game-changer. Flip the switch, and all the experimental stuff disappears. You're left with a system that is ninety-nine point nine percent reliable.

If I could give that to my brother—well, not you, the other one—or a friend, and know I wouldn't get a "help me" text two weeks later, that would be the ultimate win for the ecosystem. It turns it from a hobby into a recommendation.

And that is how you win the market. You don't win it by having three thousand integrations; you win it by having fifty integrations that never fail. The three thousand are the long tail that makes the platform powerful, but the core stability is what makes it viable.

We've covered a lot of ground here—from WASM-based sandboxing to community-driven device databases. It feels like the "fragility" we complain about is actually just the growing pains of a platform that grew faster than anyone expected.

It is. Home Assistant is the Linux of the smart home. It started as a kernel, then it became a set of tools, and now it's becoming a full-fledged operating system for the physical world. The transition from "enthusiast tool" to "stable infrastructure" is hard, but it's happening. And as Daniel's prompt suggests, it's not just a technical challenge; it's about the ecosystem and the users agreeing on what "stability" actually looks like.

Well, I for one am ready for the "Boring Smart Home" era. I want to spend my weekends actually enjoying my automated lights, not recalibrating them because a Python library decided to change its API.

Amen to that. I think we're closer than people realize. The tools are there; the focus is shifting. We just need to keep pushing for that "stable-by-design" philosophy in every part of the stack.

Let's wrap it there for the main brainstorm. I think we've given the "Home Assistant is breaking" crowd some hope that there is a path forward that doesn't involve moving back to manual light switches.

Though I do still enjoy the tactile click of a good physical switch.

Of course you do, Herman. Of course you do.

Before we go, let's look at some practical takeaways for anyone currently struggling with a "fragile" setup. If you're feeling like your Home Assistant instance is one update away from disaster, here is your action plan. First, as we said, adopt the "Stable Channel" mindset. Don't be the first person to install a point-zero release. Wait for the point-one or point-two. Second, prioritize "Works with Home Assistant" certified hardware. It's not just a sticker; it's a commitment from the manufacturer to support the integration you rely on.
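The "skip the point-zero release" rule is easy to encode if you script your updates. This is a small sketch that assumes Home Assistant's `YEAR.MONTH.PATCH` version strings; `ready_to_install` and its `min_patch` parameter are hypothetical names, not part of any Home Assistant tool.

```python
def ready_to_install(version: str, min_patch: int = 1) -> bool:
    """Return True once a release has reached at least the given patch level.

    Assumes 'YEAR.MONTH.PATCH' strings such as '2026.4.1'. Anything that does
    not parse cleanly (betas, dev builds) is treated as not ready.
    """
    parts = version.split(".")
    if len(parts) != 3 or not all(part.isdigit() for part in parts):
        return False
    return int(parts[2]) >= min_patch


for candidate in ["2026.4.0", "2026.4.1", "2026.4.0b3"]:
    print(candidate, ready_to_install(candidate))
```

Raising `min_patch` to 2 gives you the more cautious "wait for the point-two" variant.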

And third, advocate for that "Certified Integration" program in the community. The more we as users demand stability over "new features," the more the developers will prioritize it. Support the devs who are doing the unglamorous work of fixing bugs and improving documentation. They are the ones actually building the "Stable Future."

And if you're a developer, look into the "Device Database." Contribute your device's metadata. The more context the system has, the less "guessing" it has to do, and guessing is where bugs live.

I'm feeling surprisingly optimistic about this. It's easy to get bogged down in the "everything is broken" memes, but when you look at the architectural moves being made, the foundation is actually getting a lot stronger.

It is. The "Open Home" is becoming a "Reliable Home." It's just going to take a bit more "sandboxing" to get there.

Well, that's our look at a more stable future for Home Assistant. Thanks as always to our producer Hilbert Flumingtop for keeping the show running—hopefully with more stability than a legacy Tuya integration.

And a big thanks to Modal for providing the GPU credits that power the generation of this show. They make the complex stuff look easy.

This has been My Weird Prompts. If you're enjoying our deep dives into the guts of the smart home, a quick review on your podcast app really does help us out. It's how we find new listeners who are also tired of their kitchen lights being "unavailable" in the app.

Find us at myweirdprompts dot com for the full archive and all the ways to subscribe. We'll see you in the next one.

Stay stable, everyone. Or at least keep your backups current.

Goodbye.

See ya.