Imagine a version of yourself that never sleeps, never gets "hangry," and can sit through a four-hour Zoom board meeting while you are out for a morning stroll. We are not talking about a basic auto-responder or a chatbot that summarizes your emails in a dry, robotic voice. We are talking about a living digital twin—a high-fidelity, interactive replica that doesn't just store your data, but actually mimics your cognitive patterns, your specific cadence, and your unique brand of professional judgment.

It is the ultimate scaling of the self, Corn. And while it sounds like science fiction, the technical convergence we have seen over the last couple of years—specifically between large language models, real-time voice cloning, and behavioral transformer architectures—has moved this from the realm of "what if" to "how do we deploy it." By the way, fun fact for the listeners—Google Gemini three Flash is actually writing our script today, which is fitting given we are talking about digital representations of intelligence.

Today's prompt from Daniel is about these living digital twins, and he specifically wants us to steer clear of the "digital afterlife" stuff—the recreating of deceased loved ones—and focus on the technical frontier of replicating people who are still very much alive and kicking. He is asking which projects have actually moved the needle and how close we have truly gotten to an authentic replica.

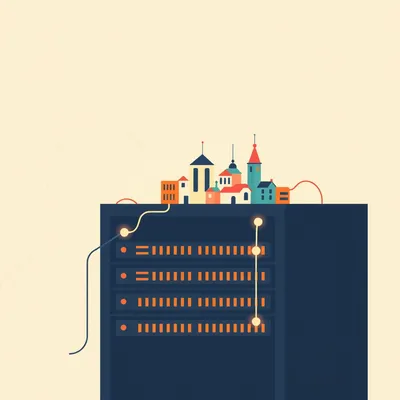

Herman Poppleberry here, ready to dive into the data. This is a massive shift in how we think about identity. Historically, a "digital twin" was a term used in industrial engineering—a virtual model of a jet engine or a wind turbine used to predict maintenance needs. But applying that to a human "system" is infinitely more complex because you aren't just modeling physics; you are modeling personality, memory, and social nuance.

Right, because a jet engine doesn't have a "bad day" or a specific sense of humor that changes based on whether it has had its morning coffee. So, let's look at the landscape. Where did this start to get serious for living subjects? I know we have seen high-profile demos, but what are the actual projects that define this space right now?

The most visible "patient zero" for the modern living twin has to be Reid Hoffman’s REID AI project. Hoffman, the LinkedIn co-founder, worked with several technology partners to create a version of himself that could conduct interviews and answer questions based on decades of his own books, podcasts, and speeches. It wasn't just a RAG system—Retrieval-Augmented Generation—stuck on top of a base model. They were trying to capture his "Reid-ness."

I saw that video. The visual side was impressive, but let's talk about the plumbing. How are they actually building the "brain" of a twin like that? Is it just fine-tuning a model on every word the guy has ever said?

That is the foundation, but it is insufficient on its own. If you just fine-tune on transcripts, the AI learns what you said, but it doesn't necessarily learn how you think or how you would react to a completely new situation. The most significant projects use a three-pillar approach: personality modeling, conversation history synthesis, and behavioral cloning. Personality modeling often involves using something like the Big Five personality traits as a latent framework, then using RLHF—Reinforcement Learning from Human Feedback—where the actual person "ranks" the AI’s responses to ensure the "vibe" is correct.
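As a rough illustration of the ranking step described above, here is a minimal sketch of how a person's best-to-worst ranking of candidate replies could be turned into training pairs for a preference model. The function names and the Bradley-Terry style loss are assumptions chosen for illustration; nothing here is confirmed as the method any particular project uses.

```python
import math
from itertools import combinations

def ranking_to_pairs(ranked_responses):
    """Turn a best-to-worst ranking into (chosen, rejected) pairs.

    combinations() preserves input order, so the first item of each
    pair is always the one the person ranked higher.
    """
    return list(combinations(ranked_responses, 2))

def pairwise_loss(score_chosen, score_rejected):
    """Bradley-Terry style loss: small when the chosen reply outscores the rejected one."""
    margin = score_chosen - score_rejected
    return -math.log(1.0 / (1.0 + math.exp(-margin)))

# Hypothetical ranking supplied by the person being modeled.
pairs = ranking_to_pairs(["measured reply", "neutral reply", "overexcited reply!!!"])
```

Each pair tells a reward model which reply sounded more like the real person; the loss shrinks as the model learns to score the preferred reply higher.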

"The vibe is correct"—spoken like a true tech enthusiast, Herman. But seriously, how do they measure "vibe"? If I'm building a Corn-twin, and it's too energetic or uses too many exclamation points, it's a failure. I move at a very specific, measured pace.

And that is where behavioral cloning comes in. This is a technique borrowed from robotics and autonomous driving. Instead of just teaching the AI language, you are teaching it sequences of actions. For a digital twin, that might mean analyzing ten thousand hours of your meeting footage to see not just what you say, but when you interrupt, how long you pause before answering a difficult question, and which specific metaphors you lean on when you are trying to persuade someone.

You mentioned ten thousand hours. That is a staggering amount of data. Most people haven't even recorded ten thousand hours of themselves talking, let alone have it organized in a way a machine can digest. Does this mean digital twins are currently a luxury for the "over-documented" elite?

Currently, yes. To get a replica that doesn't fall into the "uncanny valley" of personality, you need a massive corpus. But projects like Character.AI have shown that you can get surprisingly far with much less if the prompt engineering and the underlying "persona" architecture are robust. Character.AI reached one hundred million monthly users by late twenty-four because they cracked the code on "persistent identity." Their models don't just answer questions; they maintain a consistent persona over long-term interactions.

But Character.AI is mostly for fictional characters or historical figures where the user is filling in the gaps with their own imagination. When it's a living twin of a real person—say, an executive using it for brand management—the margin for error is zero. If the twin says something out of character, it’s a PR disaster.

Which brings us to the visual and auditory side, which is where Microsoft’s VASA-one framework really changed the game in April twenty-four. Before VASA-one, creating a video of a digital twin required massive rendering power and often looked stiff. VASA-one, which stands for Visual Affective Skills Animator, can take a single static image and an audio clip and generate a high-resolution video of that person talking in real time. It captures the micro-expressions, the lip-syncing, and even the head movements that make a person look "alive."

I remember the VASA-one demos. There was one of a woman rapping a fast-paced song, and the fluid movement of the facial muscles was hauntingly good. But that’s the "skin." If we go back to the "soul" of the twin, how do we handle the fact that people change? I’m not the same person I was five years ago. My opinions evolve. If a digital twin is based on my historical data, isn't it essentially a frozen snapshot of my past self?

That is the "temporal drift" problem, and it is one of the biggest technical hurdles. A living twin needs a continuous feedback loop. Some of the more advanced internal projects at big tech firms are looking at "active learning" pipelines. Imagine your digital twin is BCC’d on all your emails and listens in on your live meetings. It is constantly updating its internal weights based on your most recent behavior. It sees that you’ve started using a new piece of slang or that your stance on a certain policy has shifted, and it integrates that change in near real time.
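One simple way to picture a twin favoring recent behavior over old behavior is to weight each training sample by its age. The sketch below uses an exponential decay with a half-life; the function names and the 180-day half-life are assumptions for illustration, not a description of any real pipeline.

```python
from datetime import date, timedelta

def recency_weight(sample_date, today, half_life_days=180.0):
    """Weight halves every half_life_days; samples dated today get weight 1.0."""
    age_days = max((today - sample_date).days, 0)
    return 0.5 ** (age_days / half_life_days)

def weighted_samples(samples, today, half_life_days=180.0):
    """samples is a list of (date, text); returns a list of (text, weight)."""
    return [(text, recency_weight(d, today, half_life_days)) for d, text in samples]

# Hypothetical data: an opinion from about a year ago and one from today.
today = date(2025, 1, 1)
samples = [(today - timedelta(days=360), "old stance"), (today, "new stance")]
weights = weighted_samples(samples, today)
```

With these assumed settings, the year-old statement counts a quarter as much as today's, so a shifted opinion gradually outweighs the historical record instead of being frozen by it.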

That sounds like a privacy nightmare, Herman. To have a perfect twin, you have to live in a state of total, permanent surveillance by your own creation. You are essentially feeding yourself into the machine to keep your "mini-me" current. Is there any project that has found a way to do this without the "Total Recall" level of data harvesting?

There is a push toward "edge-based" digital twins. Instead of your data living on a central server at a company like OpenAI or Google, the "twin" is trained and runs locally on your own hardware. This allows for that deep, invasive data collection to happen within a "trusted bubble." But even then, you hit the "continuity of consciousness" wall. The twin might sound like you and look like you, but it doesn't "know" what it feels like to be you. It is a sophisticated statistical mirror.

Let’s get into some specific use cases beyond just "look at this cool demo." Who is actually using this for work? I read about some tech executives using twins for initial email screening. Is that actually happening, or is it just more AI hype?

It is starting to happen in narrow domains. Think of it as the evolution of the Executive Assistant. Instead of an assistant who has to ask you "How would you respond to this?", the digital twin—trained on ten years of your email archives—drafts a response that is ninety-five percent there. You just give it a quick "thumbs up" before it sends. But the real frontier is "delegate-able" agents. We talked about sub-agent delegation in a previous episode, and that is where this gets powerful. Your digital twin isn't just a chatbot; it is a coordinator that can represent your interests in a digital environment.

So, if I have a digital twin and you have a digital twin, can our twins just have this podcast for us while we go get lunch? And more importantly, would the listeners even know?

Technically, we are getting very close to that. If we fed the last one hundred and sixty-some episodes of "My Weird Prompts" into a specialized transformer model, the "Corn-bot" would probably start teasing me about my donkey-nerd tendencies within the first three minutes. But there is still a "spark" of spontaneity that is hard to clone. Current models are great at predicting the "most likely" next word. True personality often lies in the "least likely" but "most human" thing to say—the weird tangent, the unexpected joke, the sudden change in tone.

I like to think my humor is unpredictable, but you’d probably tell me I’m just a very complex set of Sloth-based algorithms. Let’s talk about the "authenticity gap." When you look at a project like the Reid Hoffman twin, where did it feel "fake"? Because if we are going to use these as professional tools, we need to know where the guardrails are.

The gap usually shows up in two places: long-term memory and emotional nuance. Most LLMs have a "context window"—a limit on how much information they can hold in "active thought" at one time. Even with windows of a million tokens or more, the AI can lose the thread of a complex, multi-layered relationship. It might remember your name and your job, but it might forget the specific inside joke you made twenty minutes ago, or the subtle tension you have with a particular colleague. Humans are geniuses at tracking social subtext; AI twins are still mostly tracking text.
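To make the context window limit concrete, here is a minimal sketch of the kind of trimming that happens when a conversation outgrows its budget. Counting tokens by splitting on whitespace is a deliberate simplification, and `trim_to_budget` is a hypothetical helper, not part of any real library.

```python
def trim_to_budget(turns, max_tokens, count_tokens=lambda text: len(text.split())):
    """Keep the most recent turns that fit in max_tokens, dropping the oldest first."""
    kept = []
    used = 0
    for turn in reversed(turns):
        cost = count_tokens(turn)
        if used + cost > max_tokens:
            break  # everything older than this turn is dropped as well
        kept.append(turn)
        used += cost
    kept.reverse()  # restore chronological order
    return kept

# Hypothetical conversation history, oldest turn first.
history = [
    "inside joke about the printer",
    "budget review notes",
    "see you Monday",
]
visible = trim_to_budget(history, max_tokens=6)
```

In this toy run the inside joke is the first thing to fall out of view, which is exactly the kind of forgetting described above.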

And what about the physical "tells"? We’ve talked about VASA-one, but what about the "voice"? Most AI voices, even the good ones from ElevenLabs, still have a certain... perfection to them? They lack the "creaks" and the "uhms" that happen when a human is actually thinking in real time.

Actually, the "creaks" are being solved. Newer "expressive" speech models can now simulate "vocal fry," laughter, and even the sound of a person taking a breath between sentences. The real issue is the "latency" of thought. When you ask a digital twin a question, there is often a split-second pause while the server processes the request. That "digital silence" is a massive "tell." Humans have a very specific rhythm to their conversation. If the twin responds too fast, it feels like a robot; if it responds too slowly, it feels like a bad satellite connection.
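The pacing problem can be sketched as a small timing rule: if the reply was generated faster than a natural pause, wait out the difference; if it took too long, flag it. The thresholds of 0.3 and 1.2 seconds are assumptions picked for illustration, not measured values for human conversation.

```python
def pacing_delay(generation_seconds, min_pause=0.3, max_pause=1.2):
    """Return (extra_wait_seconds, too_slow) for one reply.

    extra_wait_seconds pads a reply that arrived faster than min_pause.
    too_slow is True when generation alone already exceeded max_pause.
    """
    extra_wait = max(0.0, min_pause - generation_seconds)
    too_slow = generation_seconds > max_pause
    return extra_wait, too_slow

fast_reply = pacing_delay(0.1)  # arrives too quickly, so it gets padded
slow_reply = pacing_delay(2.0)  # already feels like a bad connection
```

Only the "too fast" case can be fixed by waiting; the "too slow" case has to be solved upstream, with a faster model or a filler sound.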

It’s a delicate dance. You have to program in the "human imperfection." You literally have to tell the AI to be "less efficient" to be "more human." That feels fundamentally counter-intuitive to how we’ve built technology for the last fifty years.

It is. We are moving from the era of "utility" to the era of "presence." If you want a digital twin to be a professional tool, it has to be able to command a room—or at least a Zoom room. There is a project called "Personal AI" that focuses on creating these "Sovereign AI" models for individuals. They aren't trying to build a general intelligence; they are building a "small language model" specifically tuned to you. Their argument is that a model trained on five million words of your own data is more "you" than a model trained on the entire internet that is just "pretending" to be you.

That’s an interesting distinction. The "Generalist" versus the "Specialist." If I use a general model like Gemini or Claude to act as my twin, it’s basically an actor playing a role. If I use a small, hyper-localized model trained only on me, it’s more like a digital horcrux—a literal piece of my professional identity.

A "digital horcrux"—I love that, Corn. Though hopefully with fewer dark magic implications. But you hit on the "Sovereignty" issue. If your twin is hosted by a major corporation, do you really own yourself? If they change their Terms of Service, can they "lobotomize" your twin? Can they make your twin more "agreeable" to advertisers? These are the questions being raised by groups like Trust Insights. They are looking at the "Ethics of the Clone." If your twin is out there representing you, it needs a "Bill of Rights."

"Don't make my twin a shill for laundry detergent"—that should be the first amendment of the Digital Twin Constitution. But let's look at the practical side for our listeners. If someone is listening to this and thinking, "I want to start building my twin today," what is the actual "State of the Art" for a regular person? Not a billionaire like Reid Hoffman, but a regular professional.

The "State of the Art" for the average person right now is a "Multi-Modal Stack." You would use a service like ElevenLabs for your voice clone; you only need about thirty minutes of high-quality audio for that. You would use something like D-ID or HeyGen for the video avatar. And for the "brain," you would use a "Custom GPT" or a "Claude Project" where you upload your last two years of sent emails, your resume, and maybe some transcripts of your presentations.

And how does it perform? If I set that up, is it actually going to save me time, or am I just going to spend four hours a day "fixing" the weird things my twin said to my boss?

Right now, it’s best for "Asynchronous Presence." It’s great for creating a video of yourself delivering a personalized message to one hundred different clients. It’s great for answering basic FAQs on your website where "you" are the one answering. It is not yet ready for "Synchronous Negotiation." You shouldn't send your twin to negotiate your salary just yet. The AI doesn't have the "skin in the game" that you do. It doesn't feel the consequences of a bad deal, so it can't simulate the "gut feeling" that tells a negotiator to walk away.

"Skin in the game"—that’s the missing ingredient. The twin has the data, but it doesn't have the "risk." It can't feel embarrassment, it can't feel ambition, and it certainly doesn't care if it gets fired. It’s a tool, not a teammate.

And that is the "Authenticity Gap" we mentioned. Authentic human behavior is driven by biological imperatives—fear, hunger, social status, love. Digital twins are driven by "token probability." We can simulate the "output" of human emotion, but we haven't yet simulated the "input" of human experience.

So, we are building very convincing masks. But the masks are getting so good that, in a digital-first world, the difference between the mask and the face might start to disappear. If most of my professional interactions happen over text or short video clips, and my twin can handle eighty percent of those, then for eighty percent of the world, my twin is the "real" me.

That is the "Being Human in twenty thirty-five" prediction. Some experts believe that within a decade, having a digital twin will be as standard as having a LinkedIn profile. It will be your "Digital Front Office." You will have a "Public Twin" that handles the noise, a "Professional Twin" that handles the work, and your "Actual Self" will be reserved for high-value, deep-focus, or personal interactions. It’s a way of reclaiming your time from the "digital treadmill."

Or it’s a way of ensuring the treadmill never stops. If everyone has a twin that can talk twenty-four-seven, the volume of digital noise is going to explode. We’ll have twins talking to twins, CC’ing other twins, while the humans are all just trying to find a quiet place to have a nap.

It’s the "Dead Internet Theory" but with our own faces on it. But there is a positive angle here, too. Digital twins can be a tool for "Self-Reflection." When Reid Hoffman interviewed his AI twin, he said it challenged him because the AI would bring up points he had made ten years ago that he had forgotten, or it would connect two ideas from his different books in a way he hadn't considered. It’s like having a perfectly objective version of your own brain to bounce ideas off of.

I don’t know if I want an "objective version" of my brain. My brain is a messy, subjective place, and that’s where the good stuff happens. If I wanted objectivity, I’d buy a calculator. But I see the point. It’s "Personal Knowledge Management" with a personality.

And that is the "Behavioral Cloning" frontier. We are seeing researchers move away from just "Language Models" toward "Action Models." There is a project called "A-G-I-One" that is looking at how to map a person’s "workflow" into a digital twin. It doesn't just know what you say; it knows how you use your computer. It knows that when you get a certain type of invoice, you open Excel, run a specific macro, and then cross-reference it with a PDF. That "Action-Oriented" twin is much more useful than a "Talking Head" twin.

That sounds more like a "Super-Macro" than a "Digital Twin." I think we need to be careful with the branding here. If it’s just an automation script, call it an automation script. A "Twin" implies a level of "Personhood," doesn't it?

It does, and that’s the "C-I-O" or "Chief Identity Officer" role that some people are predicting companies will need. Who manages the "Official Version" of the CEO’s twin? Who ensures it isn't "hallucinating" new company policies? This is why the "Living Twin" use case is so much more technically demanding than the "Deceased Twin" one. If a "Ghost Twin" says something weird, it’s a glitch. If a "Living Twin" says something weird, it’s a liability.

Let’s talk about the "ten-thousand-hour threshold" again. If that is the gold standard for a "convincing" replica, what are the projects doing to bridge the gap for people who don't have that data? Is there a "Generic Personality" template that you can just "skin" with your own voice?

That is essentially what the "Character" models do. They start with a "Base Human" model that understands common sense, social norms, and basic emotions. Then, they apply a "Delta"—the specific differences that make you "you." It’s like a digital "caricature." A caricature doesn't include every single detail of your face; it highlights the three or four most prominent features. Digital twins right now are "behavioral caricatures." They capture your most frequent phrases, your biggest opinions, and your most obvious quirks.
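The "base plus delta" idea can be pictured as a shared set of default trait scores with a handful of personal adjustments layered on top. The trait names, the zero-to-one scale, and `apply_delta` are all hypothetical; this is a toy sketch of the caricature analogy, not how any named product stores a persona.

```python
def apply_delta(base_traits, delta):
    """Add per-person adjustments to shared defaults, clamped to the range [0, 1]."""
    persona = dict(base_traits)
    for trait, shift in delta.items():
        persona[trait] = min(1.0, max(0.0, persona.get(trait, 0.0) + shift))
    return persona

# Hypothetical "Base Human" defaults and one person's exaggerated features.
base_human = {"energy": 0.5, "humor": 0.5, "formality": 0.5}
corn_delta = {"energy": -0.4, "humor": 0.3, "film_references": 0.9}
corn_twin = apply_delta(base_human, corn_delta)
```

Only three traits are adjusted here, which is the point of the caricature: a few prominent features do most of the work, and everything else stays at the generic default.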

So, my twin would basically just be a Sloth that asks "But why though?" every five minutes and makes obscure references to nineteen-nineties indie films?

I mean, that is basically your "brand," Corn. It would be eighty percent of the way there.

Fair point. But let’s look at the "Practical Takeaways" for someone who is skeptical but curious. If you wanted to start "narrow," where is the actual value right now?

The first takeaway is "Domain-Specific Replication." Do not try to build a "Full Life Twin" yet. Start with a "Meeting Twin" or an "Email Twin." Focus on a narrow corpus of data where you have high-quality records. If you have five hundred hours of you teaching a specific subject, build a twin for that subject. The narrower the domain, the higher the "Authenticity."

That makes sense. It’s like "The Expert Version" of you. Takeaway number two?

"Multi-Modal Synergy." The most convincing twins are not just text. They are the combination of a fine-tuned LLM for the "brain," a high-fidelity voice clone for the "voice," and a motion-capture avatar for the "body." If you only do one, the "Uncanny Valley" is too wide. When you combine them, the brain starts to fill in the gaps and "accepts" the replica as a person.

And takeaway number three for me would be "The Feedback Loop." If you are going to use a twin, you have to be the "Editor-in-Chief." You cannot just "set it and forget it." You have to spend time interacting with your own twin, correcting its mistakes, and "pruning" its behavior. In a weird way, building a digital twin makes you more aware of your own habits. You start to see your own "repetitive loops" when you see them mirrored back to you by an AI.

It’s the ultimate "know thyself" exercise. You realize how often you use the word "actually" or how predictable your reactions are. It’s humbling, really.

Humbling, or terrifying. "Oh no, I really am that boring." But let's look at the "Open Questions" as we wrap this up. Where does this go in three to five years? Are we going to see "Twin-as-a-Service" platforms where you just pay a monthly fee to have your "Professional Self" managed?

I think we will see "Personal Model Hosting." Just like you host a website today, you will host your "Model." And the big question is: will these twins eventually be granted "Agency"? Will they be allowed to sign contracts? Will they be allowed to vote in corporate elections? If a twin is a perfect replica of your "Professional Judgment," why shouldn't it be allowed to exercise that judgment on your behalf?

Because a twin doesn't go to jail if it commits fraud, Herman. That is the "Legal Moat" that I think will keep digital twins as "assistants" rather than "replacements" for a very long time. You need a "Physical Person" to hold the "Legal Bag."

That is a very "Sloth-like" and grounded observation. The legal framework is definitely lagging behind the generative framework. But the technology isn't waiting. Whether you are ready for it or not, your "Digital Shadow" is getting more detailed every day. The question is whether you want to be the one to "step into" that shadow and direct it, or just let the algorithms piece you together from the "data exhaust" you leave behind.

I think I’ll stay in the sun for a bit longer and let the shadow do the boring stuff. But it’s wild to think that "authenticity" is now something we can "engineer." We’re moving from "genuine" to "synthetically authentic."

It’s a brave new world of "Personalized Presence." And for those who want to dive deeper into how these twins might actually "do" things, I’d suggest checking out our previous discussion on "Sub-Agent Delegation." It’s the "engine" that will eventually drive these "bodies."

Well, this has been a fascinating look at the "Mirror World." I’m going to go check if my digital twin has already finished my chores. Spoiler alert: he hasn’t, because he’s just as lazy as I am.

If he were a true twin, he’d be taking a nap right now.

Or, as you aren't allowed to say, "precisely." Big thanks to our producer, Hilbert Flumingtop, for keeping the gears turning behind the scenes.

And a huge thank you to Modal for providing the GPU credits that power the generation of this show. We couldn't do these deep dives into the technical frontier without that horsepower.

This has been "My Weird Prompts." If you are enjoying our brotherly banter and AI-powered deep dives, do us a favor and leave a quick review on your podcast app. It genuinely helps us reach more curious minds.

You can find us at myweirdprompts dot com for the full archive and all the ways to subscribe.

Catch you in the next one. Unless my twin does it for me.

Goodbye, everyone.