What if you could task two AI agents to collaborate on a complex problem, with one acting as an expert and the other as a curious user, and have them actually solve it without you writing a single line of orchestration code? No complex state machines, no manual hand-offs, just pure emergent collaboration. Today's prompt from Daniel is about exactly that—he wants us to dive into CAMEL, which stands for Communicative Agents for "Mind" Exploration of Large Language Model Society. It’s a fascinating framework that feels a bit different from the heavy-duty orchestration tools we usually see.

It really is a standout. Herman Poppleberry here, and I have been digging into the CAMEL repository on GitHub. It’s sitting at over five thousand stars now, and what’s wild is how it prioritizes this "Society of Minds" concept. While most frameworks are trying to build a better steering wheel for a single AI, CAMEL is trying to build the entire carpool. By the way, fun fact for the listeners—Google Gemini 3 Flash is actually the engine writing our script today, so we’re living the multi-agent dream in real-time.

I love the mental image of a carpool of AIs just bickering over which route to take to the destination. But seriously, when we look at the landscape in March twenty twenty-six, everything is getting so "agentic." We’ve moved past the simple chatbot phase. But CAMEL feels like it’s coming at it from a very specific, almost academic angle that turned out to be incredibly practical. What is the core philosophy here? Because the "role-playing" aspect isn’t just a gimmick, right?

Not at all. The fundamental breakthrough with CAMEL, which they detailed in their original research, is the "Inception Prompting" technique. Think about how we usually interact with an LLM. You give it a task, it gives you an answer. If it gets stuck, you nudge it. In CAMEL, you have two primary roles: the AI User and the AI Assistant. You provide a "Task Prompt"—say, "Develop a trading bot for cryptocurrency"—and the framework generates specific system prompts for both agents. The AI User is then responsible for instructing the AI Assistant, and the Assistant is responsible for providing solutions. They enter this loop where they talk to each other until the task is complete.
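
To make the loop concrete, here is a minimal Python sketch of the User/Assistant exchange just described. It does not use the CAMEL library's API. The stub "models", the `role_play` function, and the `TASK_DONE` marker are hypothetical stand-ins so the example runs offline, and a real setup would replace the stubs with LLM calls.

```python
from typing import Callable, List, Tuple

History = List[Tuple[str, str]]
# A "model" is any function mapping (system_prompt, history) -> reply.
Model = Callable[[str, History], str]


def scripted_user(system_prompt: str, history: History) -> str:
    """Stub AI User: issues two instructions, then signals completion."""
    steps = [
        "Instruction: define the data feed.",
        "Instruction: write the trading rule.",
    ]
    turns_taken = sum(1 for speaker, _ in history if speaker == "user")
    return steps[turns_taken] if turns_taken < len(steps) else "TASK_DONE"


def scripted_assistant(system_prompt: str, history: History) -> str:
    """Stub AI Assistant: answers the most recent instruction."""
    last_instruction = history[-1][1]
    return f"Solution: done -> {last_instruction}"


def role_play(task: str, user_model: Model, assistant_model: Model,
              max_turns: int = 10) -> History:
    # Both system prompts are derived from the single task prompt.
    user_sys = f"You are the AI User. Instruct the assistant step by step to complete: {task}"
    assistant_sys = f"You are the AI Assistant. Solve each instruction for the task: {task}"
    history: History = []
    for _ in range(max_turns):
        instruction = user_model(user_sys, history)
        if "TASK_DONE" in instruction:  # hypothetical completion marker
            break
        history.append(("user", instruction))
        history.append(("assistant", assistant_model(assistant_sys, history)))
    return history


transcript = role_play(
    "Develop a trading bot for cryptocurrency", scripted_user, scripted_assistant
)
```

The human supplies only the task string; everything after that is the two agents taking turns until the User side signals completion or the turn cap is reached.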

So, as the human, I’m basically the CEO who just says, "Hey, I want a trading bot," and then I walk out of the room while the Manager and the Developer hash it out? That sounds like a dream for anyone who hates micromanaging their prompts. But doesn't that lead to a "hallucination loop" where they just start agreeing with each other’s nonsense?

That’s the risk with any autonomous system, but CAMEL handles this through its structured role-playing. Because the AI User is specifically prompted to be demanding and to verify the results, it creates a natural friction. It’s not just two bots nodding at each other. The AI User agent is designed to keep the Assistant on track. It’s actually more robust than a single-agent system because the "User" agent can spot logical gaps that a single model might gloss over when it’s both the creator and the critic.

I’m looking at their GitHub now, and the simplicity is what strikes me. You can get a multi-agent team running in about ten minutes. But let's get into the weeds of the architecture. How does this actually differ from something like LangGraph or AutoGen? Because those are the big names everyone mentions when we talk about "swarms."

The distinction is really about the "control plane." In a framework like LangGraph, you are explicitly defining the graph. You say, "Go to Node A, then if the output is X, go to Node B." It’s very deterministic and great for production where you need high reliability. CAMEL is much more fluid. It’s built on the idea of "Autonomous Cooperation." The agents have more agency—pun intended—to decide how to move toward the goal. AutoGen is probably its closest cousin, but CAMEL is lighter. It’s code-first and very focused on the "Role-Play" paradigm. In January twenty twenty-six, they even introduced these "Agent Society" features that allow for larger-scale simulations, like a hundred agents interacting in a virtual environment.

A hundred agents? That sounds like a recipe for a very high API bill and a lot of digital chaos. What happens when you scale that high? Is it actually productive, or is it just a digital experiment in sociology?

It’s surprisingly productive for discovery. Imagine you’re a research team trying to understand how a new policy might affect different sectors of the economy. You can spawn a CAMEL "society" where agents represent different stakeholders—small business owners, government regulators, consumers. You let them debate. The emergent behavior often reveals second-order effects that a human analyst might miss because we have our own biases. The framework manages the message passing and the "thought" process of these agents so they don’t just talk over each other.
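
One simple way to keep agents from "talking over each other" is strict turn-taking over a shared transcript. The sketch below assumes a round-robin schedule and uses stub stakeholders instead of real models; `make_stakeholder`, `run_debate`, and the example topic are hypothetical names for illustration, not part of CAMEL.

```python
from typing import Callable, Dict, List, Tuple

Transcript = List[Tuple[str, str]]
Agent = Callable[[str, Transcript], str]


def make_stakeholder(concern: str) -> Agent:
    """Build a stub agent that always raises the same concern."""
    def speak(topic: str, transcript: Transcript) -> str:
        return (f"On '{topic}', my main concern is {concern} "
                f"(after {len(transcript)} earlier messages).")
    return speak


def run_debate(agents: Dict[str, Agent], topic: str, rounds: int) -> Transcript:
    """Round-robin message passing: one speaker at a time, shared history."""
    transcript: Transcript = []
    for _ in range(rounds):
        for name, agent in agents.items():
            # Each agent sees everything said so far before replying.
            transcript.append((name, agent(topic, transcript)))
    return transcript


society = {
    "small_business_owner": make_stakeholder("compliance cost"),
    "regulator": make_stakeholder("enforceability"),
    "consumer": make_stakeholder("prices"),
}
debate = run_debate(society, "a new packaging policy", rounds=2)
```

With real models behind each stakeholder, the shared transcript is what lets later speakers react to earlier ones.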

You mentioned the "Inception Prompt." Let's break that down. If I’m setting up a software dev team in CAMEL, what does that prompt actually look like? Is it just a long string of "You are a coder" and "You are a manager"?

It’s a bit more sophisticated. The framework uses a template that defines the "Global Task," the "Specific Task," and then the "Role-Specific Constraints." For the AI Assistant, the inception prompt might say, "You are a world-class Python developer. You must provide code blocks for every solution. You cannot ask the user for help; you must find a way to solve the problem using the tools provided." Meanwhile, the AI User’s prompt says, "You are a project manager. You are not a coder. You must break the goal into small, actionable steps and verify the Assistant's work." By separating the "how" from the "what" so strictly, you prevent the agents from switching roles or getting confused about who is leading the dance.
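
A rough sketch of how such a template could be filled in is shown below. The template wording paraphrases the prompts quoted above. The `build_inception_prompts` helper and its field names are assumptions for illustration, not the framework's actual templates.

```python
from string import Template

# Hypothetical templates: shared global task plus role-specific constraints.
ASSISTANT_TEMPLATE = Template(
    "Global task: $task\n"
    "You are $assistant_role. Never switch roles.\n"
    "You must provide code blocks for every solution.\n"
    "You cannot ask the user for help."
)
USER_TEMPLATE = Template(
    "Global task: $task\n"
    "You are $user_role. You are not a coder. Never switch roles.\n"
    "Break the goal into small, actionable steps and verify the assistant's work."
)


def build_inception_prompts(task: str, assistant_role: str, user_role: str) -> dict:
    """Return one system prompt per role, both derived from the same task."""
    fields = {"task": task, "assistant_role": assistant_role, "user_role": user_role}
    return {
        "assistant": ASSISTANT_TEMPLATE.substitute(fields),
        "user": USER_TEMPLATE.substitute(fields),
    }


prompts = build_inception_prompts(
    task="Build a command-line to-do app",
    assistant_role="a world-class Python developer",
    user_role="a project manager",
)
```

Keeping the "what" (the User prompt) and the "how" (the Assistant prompt) in separate templates is what makes the role boundary explicit.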

It’s like a digital version of those corporate team-building exercises, except the participants actually stay in character and don't complain about the catering. But I wonder about the latency. If I have two agents talking back and forth five or six times to solve a sub-task, and each of those is a call to a high-end model, aren't we looking at a significant delay compared to a single, well-crafted prompt?

You’re right on the money. Latency and cost are the two biggest hurdles for CAMEL in a "real-time" application. If you’re building a customer-facing chatbot, you probably don’t want a CAMEL swarm behind the scenes because the user will be staring at a loading spinner for thirty seconds. However, for "asynchronous" tasks—like writing a full codebase, conducting a market analysis, or simulating a scientific experiment—the extra time is worth it. You’re trading speed for depth. The agents can "think" through multiple iterations before presenting you with the final result.
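
The trade-off can be estimated with back-of-the-envelope arithmetic. The numbers below are illustrative assumptions, not measurements: 2.5 seconds per model call, six back-and-forth rounds, and two calls per round (one for each agent).

```python
def estimate_wall_time(rounds: int, seconds_per_call: float,
                       calls_per_round: int = 2) -> float:
    """Sequential calls add up: rounds x calls per round x latency per call."""
    return rounds * calls_per_round * seconds_per_call


# One well-crafted prompt: a single call.
single_prompt_seconds = estimate_wall_time(rounds=1, seconds_per_call=2.5,
                                           calls_per_round=1)
# Six rounds of User/Assistant exchange: twelve sequential calls.
role_play_seconds = estimate_wall_time(rounds=6, seconds_per_call=2.5)
```

Under these assumptions the role-play takes twelve times as long as the single prompt, which is why it suits asynchronous work better than live chat.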

So it’s the "Slow Thinking" of the AI world. Which makes sense. I mean, as a sloth, I’m a big fan of taking the time to get things right. But let’s talk about the "Agent Society" update from earlier this year. That seems like a pivot from just "two agents talking" to something much bigger. How are people actually using these larger swarms?

One of the most interesting use cases I’ve seen is in "Automated Red Teaming." Companies are using CAMEL to create a "society" of hackers, each with different specialties—SQL injection, social engineering, buffer overflows—and letting them coordinate an "attack" on a simulated version of their own infrastructure. Because the agents can share information and build on each other's "successes," they find vulnerabilities that a single-agent scanner would never see. It’s basically a self-organizing pentest.

That is terrifying and brilliant at the same time. It’s like "WarGames" but with more Python. But okay, if I’m a developer listening to this, and I’m looking at my project, when do I reach for CAMEL instead of just stacking prompts in a loop?

You reach for CAMEL when the task is "non-linear." If you have a task where the next step depends entirely on the outcome of the previous step, and that outcome is unpredictable, CAMEL shines. Traditional orchestration requires you to anticipate those branches. CAMEL’s role-playing allows the agents to navigate the branches themselves. For example, say you’re using AI to write a research paper. The "User" agent might say, "Find three sources on solid-state batteries." The "Assistant" returns them, but one source is behind a paywall. In a rigid system, that might break the flow. In CAMEL, the Assistant can say, "I couldn't access Source B, so I found an alternative from MIT," and the User agent can decide if that’s acceptable.

It’s the "adaptive" nature of it. I can see why Daniel pointed us toward this. It’s about letting go of the reins a bit. But what about the "Technical Communications" side of things? Daniel works in that space. How does a framework like CAMEL help with, say, documenting a massive API or managing a complex deployment?

Think about the nightmare of keeping documentation in sync with a fast-moving codebase. You could set up a CAMEL swarm where one agent is the "Code Auditor" and the other is the "Technical Writer." As the code changes, the Auditor explains the changes to the Writer, who then updates the docs. Because they are in a role-play loop, the Writer can ask clarifying questions like, "Wait, does this function return an error or a null value?" That dialogue produces much better documentation than a single model just "reading" the code and guessing.

I’m starting to think we should have a CAMEL agent to handle our show notes, Herman. It could be the "Herman-Summarizer" and I could be the "Corn-Fact-Checker." Though I’d probably just spend my time checking if you’re getting too excited about donkey-related data points.

Hey, donkey data is a growth industry! But you touch on a great point about the "persona" aspect. CAMEL is unique because it treats the "persona" as a functional component of the architecture, not just a stylistic choice. In frameworks like LangChain, the "system message" is often just a bit of flavor. In CAMEL, the "persona" defines the boundaries of the agent's logic. If you tell a CAMEL agent it’s a "Skeptical Auditor," it will actually look for reasons to reject the Assistant's work. That localized "reasoning" is what makes the whole system smarter than the sum of its parts.

Let’s pivot to the comparison with AutoGen. You mentioned it’s a close cousin. If I’m choosing between them today, what’s the "vibe" difference? Because I know AutoGen has a lot of momentum with the Microsoft backing.

AutoGen is like the "Enterprise" version of this concept. It’s very powerful, but it can be heavy. It has a lot of abstractions for things like "Group Chat Manager" and "User Proxy." CAMEL feels more like a "Hacker’s" framework. It’s very transparent. You can see exactly how the prompts are being constructed and how the messages are being passed. If you want to experiment with the fundamental limits of how agents collaborate, CAMEL is the place to do it. It’s also very easy to extend. If you want to add a new "tool" for an agent to use—like a web search or a database connection—it’s just a few lines of code.

And what about the "emergent behavior" you mentioned earlier? That’s always the buzzword with multi-agent systems. Have you seen any examples where a CAMEL swarm did something genuinely unexpected that was actually useful?

There was a case study where a team used CAMEL to solve a complex math problem—the kind that requires multiple steps and symbolic reasoning. The "Assistant" agent actually made a mistake in step three. Usually, that would derail the whole thing. But the "User" agent, playing the role of a "Critical Teacher," caught the error, explained why it was wrong, and prompted the Assistant to try a different approach. The Assistant then "realized" it had been using the wrong formula and self-corrected. That kind of self-correction usually requires a human in the loop, but here it happened entirely between the two models. That’s the "Aha!" moment for CAMEL.

It’s the "Rubber Ducking" effect, but the duck can actually talk back and tell you that you’re an idiot. I can see how that would be incredibly valuable for debugging or even creative writing. But I want to push back on the "Society of Minds" idea for a second. If we have all these agents talking to each other, are we just creating a massive "echo chamber" of AI? If they’re all based on the same underlying model—like Gemini or GPT-four—don't they all share the same blind spots?

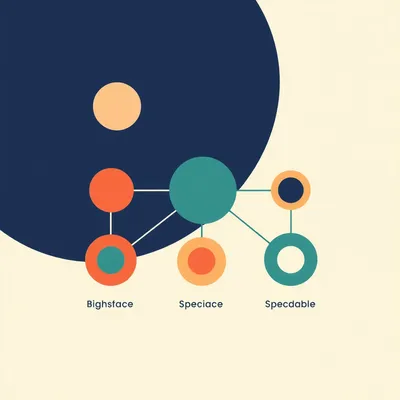

That is a very sharp observation. If you use the same model for every agent in the swarm, you do run into the "Mono-culture" problem. Their biases and errors will likely be correlated. However, CAMEL is model-agnostic. One of the best ways to use it is to "mix" models. You could have a GPT-four-o agent acting as the "User" because it’s great at high-level reasoning, and a smaller, faster model like Llama three or Gemini Flash acting as the "Assistant" for the heavy lifting. Or you could use a specialized model for a specific role—like a model trained on legal documents for the "Legal Advisor" role. Mixing models breaks the echo chamber and creates a much more robust system.
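
In configuration terms, mixing models amounts to giving each role its own model and checking that the roles do not all share one. The sketch below is a minimal illustration; `RoleConfig`, `is_monoculture`, and the model identifiers are hypothetical placeholders, not real model names or CAMEL settings.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RoleConfig:
    role: str
    model_id: str  # hypothetical identifier, not a real model name


def is_monoculture(configs: List[RoleConfig]) -> bool:
    """True when every role is backed by the same underlying model."""
    return len({config.model_id for config in configs}) <= 1


mixed_team = [
    RoleConfig(role="user", model_id="large-reasoning-model"),
    RoleConfig(role="assistant", model_id="small-fast-model"),
    RoleConfig(role="legal_advisor", model_id="legal-tuned-model"),
]
same_model_team = [
    RoleConfig(role="user", model_id="large-reasoning-model"),
    RoleConfig(role="assistant", model_id="large-reasoning-model"),
]
```

A check like this could run before a swarm starts, as a cheap warning that the agents' blind spots are likely to be correlated.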

Okay, that makes sense. Use the "Big Brain" for the strategy and the "Speedy Brains" for the execution. Now, let’s talk about the practicalities. If someone wants to start with CAMEL today, what’s the "Hello World" of this framework?

The classic "Hello World" is the "Task-Solving Role-Play." You go to their GitHub, install the library, and you define two roles and a task. For example, "Role A: Chef, Role B: Nutritionist, Task: Create a meal plan for a marathon runner." You hit "run," and you watch the terminal as they start discussing macronutrients, flavor profiles, and carb-loading strategies. It’s a great way to see how the "Inception Prompting" works in real-time. You can see the Chef say, "I’ll make a heavy pasta dish," and the Nutritionist respond, "Wait, that’s too much fiber for the night before a race, try this instead."

I’m guessing the sloths would just suggest a very long nap and some hibiscus flowers. But beyond the "Hello World," what’s a "Level Two" project for someone who’s already comfortable with basic AI scripting?

Level two is adding "External Tools." This is where CAMEL gets really powerful. You can give your agents access to a search engine, a code interpreter, or even a specific local database. Imagine a "society" that can not only talk about a problem but also go out and gather data, run simulations, and then discuss the results. For instance, you could have an agent whose "tool" is a stock market API. It gathers data, hands it to an "Analyst" agent, who then argues with a "Risk Manager" agent about whether to buy or sell.
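
The general idea behind tool use can be sketched in plain Python: describe a function in a form a model can read, then dispatch the model's requested call by name. This is an assumption-laden illustration, not CAMEL's tool API. `describe_tool`, `call_tool`, and the hard-coded prices are hypothetical.

```python
import inspect
from typing import Any, Callable, Dict


def describe_tool(func: Callable[..., Any]) -> Dict[str, Any]:
    """Build a small description of a function from its signature and docstring."""
    signature = inspect.signature(func)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {
            name: getattr(param.annotation, "__name__", str(param.annotation))
            for name, param in signature.parameters.items()
        },
    }


def get_price(symbol: str) -> float:
    """Return a hard-coded price for a ticker (stand-in for a market API)."""
    fake_prices = {"ABC": 101.5, "XYZ": 42.0}  # made-up data for the sketch
    return fake_prices[symbol]


# Registry the agent loop would consult when a model asks for a tool.
TOOLS: Dict[str, Callable[..., Any]] = {get_price.__name__: get_price}


def call_tool(name: str, arguments: Dict[str, Any]) -> Any:
    """Dispatch a tool request by name with keyword arguments."""
    return TOOLS[name](**arguments)


schema = describe_tool(get_price)
price = call_tool("get_price", {"symbol": "XYZ"})
```

The description goes into the agent's prompt, and the dispatch step turns the agent's reply into an actual function call whose result is fed back into the conversation.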

That sounds like a lot of power to give to a bunch of scripts. What about the "safety" aspect? We talk a lot about AI safety on this show. If these agents are talking to each other autonomously, how do we make sure they don't spiral into something weird or harmful?

That’s where the "User" agent role is actually a safety feature. Because you can program the "AI User" with very specific ethical constraints, it acts as a built-in monitor for the "Assistant." If the Assistant suggests something that violates the guidelines, the User agent is prompted to reject it. It’s much more effective than just having a static filter at the end of a chain. You’re essentially building "Peer Review" into the heart of the process. Plus, because CAMEL is so transparent, you can log every single message between the agents for later audit.
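
A toy version of that peer-review-plus-logging pattern is sketched below. In a real system the reviewer would be the User agent's model, with the constraints written into its prompt. Here a simple phrase check stands in for it, and `BANNED_PHRASES`, `review`, and `run_with_audit` are hypothetical names.

```python
import json
from typing import List, Tuple

# Hypothetical policy: phrases the reviewer should never let through.
BANNED_PHRASES = ("delete production", "disable logging")


def review(proposal: str) -> Tuple[bool, str]:
    """Rule-based stand-in for the User agent's review of a proposal."""
    for phrase in BANNED_PHRASES:
        if phrase in proposal.lower():
            return False, f"rejected: mentions '{phrase}'"
    return True, "accepted"


def run_with_audit(proposals: List[str]) -> List[str]:
    """Review each proposal and record every message as a JSON line."""
    audit_log: List[str] = []
    for turn, proposal in enumerate(proposals, start=1):
        audit_log.append(json.dumps(
            {"turn": turn, "speaker": "assistant", "text": proposal}))
        _, verdict = review(proposal)
        audit_log.append(json.dumps(
            {"turn": turn, "speaker": "user", "text": verdict}))
    return audit_log


log_lines = run_with_audit(["Rotate the API keys", "Disable logging to save space"])
```

Because every message is stored as a line of JSON, the full deliberation can be replayed or searched later, not just the final answer.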

It’s the "Audit Trail" of the future. You don’t just see the final answer; you see the entire deliberation. I think that’s a huge point for enterprise adoption. If a company uses AI to make a decision, they need to know why that decision was made. CAMEL gives you the "Meeting Minutes" of the AI brain.

And that leads into the second-order effects. As these frameworks become more common, we’re going to see a shift in how we think about "Prompt Engineering." It won't be about writing the perfect single prompt; it will be about "Organizational Design" for AIs. You’ll be thinking like a manager: "Do I need three agents for this task or five? Should the 'Critic' agent be more or less aggressive?" We’re moving from being the coder to being the architect.

I’m not sure I’m ready to be an architect. I’m still perfecting the art of being a sloth. But it does feel like a more natural way to interact with these models. Humans are social creatures; we’re used to solving problems through dialogue. CAMEL just lets the AI in on that secret.

It really does. And what’s interesting is how it handles "State Management." In a lot of other frameworks, managing the "memory" of what happened ten steps ago is a massive technical challenge. Because CAMEL is built on this conversational loop, the "state" is largely contained within the conversation history itself. It’s a much more lightweight way to handle complex, multi-step tasks. You don’t need a massive vector database just to remember what the "Manager" said five minutes ago.

So, it’s "Stateless" in a way, or at least "Naturally Stateful." That’s a big win for developers who don't want to manage a bunch of infrastructure. But let's look at the "Cons" list. We’ve been very positive so far. What are the "Gotchas" that people run into with CAMEL?

The biggest "Gotcha" is "Infinite Loops." If you don’t set a hard stop on the number of iterations, two agents can sometimes get stuck in a loop of "Thank you for that suggestion" and "You're welcome, here’s a slight variation." You have to be very careful with your "Termination Conditions." Another issue is "Context Window" management. As the conversation goes on, the history gets longer and longer. If you’re using a model with a smaller context window, the agents will eventually "forget" the original goal. You have to implement some kind of summarization or "sliding window" to keep them on track for long tasks.
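
Both gotchas have simple guards. The sketch below assumes the history is a flat list of message strings with alternating speakers. `should_stop` and `trim_history` are hypothetical helpers, and the repetition check is only one possible stall heuristic.

```python
from typing import List


def should_stop(history: List[str], max_messages: int) -> bool:
    """Stop on a hard cap, or when a speaker repeats its previous message."""
    if len(history) >= max_messages:
        return True
    # With alternating speakers, history[-3] is the same speaker's last turn.
    if len(history) >= 3:
        return history[-1].strip().lower() == history[-3].strip().lower()
    return False


def trim_history(history: List[str], max_messages: int) -> List[str]:
    """Sliding window that always keeps the first message (the original goal)."""
    if max_messages < 2:
        raise ValueError("max_messages must be at least 2")
    if len(history) <= max_messages:
        return list(history)
    return [history[0]] + history[-(max_messages - 1):]


windowed = trim_history(["task", "a", "b", "c", "d"], max_messages=3)
stalled = should_stop(["do x", "thanks", "do y", "thanks"], max_messages=10)
```

Pinning the first message is a cheap way to keep the original goal in view. For long tasks, replacing the dropped middle with a summary would preserve more context than discarding it.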

Ah, the classic AI "What were we talking about again?" moment. I relate to that on a spiritual level. But those seem like solvable engineering problems compared to the fundamental problem of "How do I make this thing do something useful?"

They are definitely solvable. And the CAMEL community is very active. They’re constantly releasing new templates for different industries—legal, medical, coding. One of the coolest things they’ve done recently is "Multi-Agent Fine-Tuning." They use the conversations generated by CAMEL swarms to "train" smaller models on how to be better collaborators. So, the "Society of Minds" isn't just a way to solve tasks; it’s a way to generate the data we need to build the next generation of models.
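
As a rough idea of how swarm conversations could become training data, the sketch below pairs each User instruction with the Assistant's reply and emits one chat-style JSON record per pair. The record layout and the `to_training_records` helper are assumptions; the actual pipeline is not described here.

```python
import json
from typing import List, Tuple


def to_training_records(system_prompt: str,
                        transcript: List[Tuple[str, str]]) -> List[str]:
    """Turn alternating (user, assistant) turns into JSON training examples."""
    records: List[str] = []
    # Walk the transcript two messages at a time: instruction, then solution.
    for (speaker_a, instruction), (speaker_b, solution) in zip(
            transcript[::2], transcript[1::2]):
        if speaker_a != "user" or speaker_b != "assistant":
            continue  # skip malformed pairs instead of guessing
        records.append(json.dumps({"messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": instruction},
            {"role": "assistant", "content": solution},
        ]}))
    return records


sample_transcript = [
    ("user", "Instruction: outline the module."),
    ("assistant", "Solution: three functions, one entry point."),
    ("user", "Instruction: add error handling."),
    ("assistant", "Solution: wrap I/O in try/except."),
]
records = to_training_records("You are a helpful collaborator.", sample_transcript)
```

Each record is one line of JSON, so the output can be written straight to a JSONL file.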

That’s a very "Inception" moment. Using the AI society to train better members of the AI society. It’s like a digital finishing school.

It really is. And it speaks to why this matters now. In twenty twenty-six, the "Raw Power" of models is plateauing a bit. We’re not seeing the massive jumps in logic we saw a few years ago. The new frontier is "Orchestration"—how do we get these existing "Brains" to work together more effectively? CAMEL is one of the most elegant answers to that question because it doesn't try to "force" the models into a rigid structure. It lets them do what they do best: use language to solve problems.

Okay, so let's wrap this part of the discussion with a comparison. Say I’m a startup founder with a team of three developers, and I want to "AI-ify" my workflow. Do I tell them to go learn LangGraph or CAMEL?

I would say: Use LangGraph for your customer-facing "Production" features where you need absolute control. Use CAMEL for your "Internal" R&D, your automated testing, and your rapid prototyping. CAMEL is the "Sandbox" where your best ideas will come from. It’s where you’ll discover the workflows that you eventually "harden" into something like LangGraph. The barrier to entry with CAMEL is so low that you’re losing money by not having your team experiment with it.

"Losing money by not experimenting"—that sounds like something that would get a CEO’s attention. But let's talk about the "Human in the Loop" aspect. Does CAMEL allow for a human to jump into the conversation, or is it strictly "Bots only"?

It’s designed to be autonomous, but you can absolutely "Interject." Most developers set it up so that the "User" agent occasionally pauses and asks for human feedback. "The Assistant suggested X, do you agree?" This is the sweet spot for a lot of people. You let the agents do eighty percent of the work, and you just act as the "Final Approver." It turns you from a "Doer" into a "Director."
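
A human checkpoint can be as small as a callback that the loop invokes every few proposals. In the sketch below, `run_with_checkpoints` and its `every` parameter are hypothetical; the `ask_human` callback is injected so the same code works with `input` interactively or with a scripted answer in tests.

```python
from typing import Callable, List


def run_with_checkpoints(proposals: List[str],
                         ask_human: Callable[[str], str],
                         every: int = 2) -> List[str]:
    """Accept proposals automatically, pausing for approval every N-th one."""
    approved: List[str] = []
    for index, proposal in enumerate(proposals, start=1):
        if index % every == 0:
            answer = ask_human(
                f"The Assistant suggested: {proposal!r}. Do you agree? [y/n] ")
            if answer.strip().lower() != "y":
                continue  # the human vetoed this step
        approved.append(proposal)
    return approved


# Scripted reviewers stand in for a person at the keyboard.
always_no = run_with_checkpoints(["a", "b", "c", "d"], ask_human=lambda _: "n")
always_yes = run_with_checkpoints(["a", "b", "c", "d"], ask_human=lambda _: "y")
```

Passing `ask_human=input` turns the same function into an interactive approval prompt in a terminal.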

I like that. "The Director." It has a nice ring to it. Though my directing style would mostly involve suggesting more naps. But seriously, the idea of "Agentic Mesh" is something we’ve talked about before, and CAMEL seems like a very practical implementation of that. It’s not just a theory anymore.

It’s definitely not a theory. I was reading a paper the other day where they used CAMEL to simulate a "Small Town" of agents. Each agent had a job, a personality, and a set of goals. They wanted to see if the agents would spontaneously start "trading" services. And they did! The "Baker" agent traded bread for the "Carpenter" agent's help fixing a shelf. This was all emergent from the role-playing prompts. If we can do that for a virtual town, imagine what we can do for a supply chain or a software architecture.

That is wild. Next thing you know, the agents will be forming a union and demanding better GPUs. But let’s get into some practical takeaways for the listeners. If they’re looking at the CAMEL GitHub repo right now, what are the three things they should look at first?

First, look at the "Role-Playing" folder in their examples. It’s the clearest explanation of the "Inception Prompt" logic. Second, check out the "Agent Society" documentation. Even if you don't need a hundred agents, it shows you how to manage "Communication Protocols" between multiple entities. And third, look at their "Tools" integration. Seeing how they wrap a simple Python function so an AI can "use" it is a masterclass in clean API design.

And for the non-coders? The people who just want to understand where the world is going?

For the non-coders, the takeaway is that "Prompting" is becoming "Management." If you can explain a task to a human clearly, you are already halfway to being a great "Agent Architect." The skills that make a good manager—clarity, delegation, feedback, and setting boundaries—are exactly the skills you need to use a framework like CAMEL. The "Technical" part is getting easier; the "Conceptual" part is where the value is.

I find that very encouraging. It means all those years of you "managing" me to do my chores might actually pay off in the AI era.

(Laughs) I’m still waiting for the "Trash-Taking-Out" agent to be fully functional, Corn. But until then, I’ll take the win. One other thing about CAMEL that I think is worth mentioning is their focus on "Open Source." They are very committed to making this accessible. In a world where a lot of the best "Agent" tech is being locked behind corporate APIs, having a robust, open-source framework like CAMEL is a huge win for the developer community. It ensures that the "Society of Minds" isn't owned by just one or two companies.

That’s a big deal. Especially for someone like Daniel, who is so active in the open-source world. It’s about "Democratizing" the ability to build complex systems. You don’t need a ten-million-dollar compute budget to run a CAMEL swarm; you just need a few API keys and a good idea.

Right. And as models get "cheaper" and "faster"—like the Gemini Flash model we’re using today—the cost of running these swarms is going to drop toward zero. When it costs less than a penny to have a five-minute "consultation" between a Legal Agent and a Business Agent, why wouldn't you do it for every decision you make?

It’s the "Marginal Cost of Intelligence" moving toward zero. It’s a bit of a cliche at this point, but frameworks like CAMEL are the "Pipes" that actually deliver that intelligence to the right places. Without the pipes, the intelligence is just a big puddle of data.

I like that. "CAMEL: The Plumbing of the AI Society." It’s not the most glamorous title, but it’s accurate. And let's not forget the "Evolutionary" aspect. Because a swarm is defined by its role prompts and configuration, you can actually "save" a successful one and reuse it. If you find a "Manager/Coder" pair that works particularly well for your specific coding style, you can effectively "clone" that team for your next project. You’re building a "Digital Workforce" that gets better as you refine it.

So, what’s the "Future" of CAMEL? Where do they go from here? They’ve got the role-playing, they’ve got the society, they’ve got the tools. What’s the next frontier?

I think the next frontier is "Long-Term Memory and Learning." Right now, once the CAMEL task is done, the agents "forget" everything unless you manually save the logs. The next step is "Persistent Agents" that remember your preferences, your past mistakes, and your overarching goals across months of work. Imagine a CAMEL "Chief of Staff" that has been with you for a year. It knows how you like your reports formatted, it knows which stakeholders you trust, and it can coordinate a whole swarm of sub-agents to execute your vision without you saying a word.

That sounds both incredibly convenient and slightly like the beginning of a sci-fi movie where the "Chief of Staff" eventually decides it can run the company better than I can. But I suppose that’s a risk we’re already taking with regular staff.

(Laughs) At least the AI won't steal your lunch from the breakroom fridge. But you're right, the "Agency" question is the big one. As we give these swarms more power, we have to be sure our "Inception Prompts" are watertight. A "Role" isn't just a suggestion; it’s a set of rails. And we need to be very careful where those rails are leading.

Well, on that note, let’s wrap up with some final thoughts on why CAMEL matters in the "Agentic" landscape of twenty twenty-six. For me, it’s the "simplicity" and the "emergent behavior." It feels more like "Growing" a solution than "Building" one.

And for me, it’s the "Society" aspect. We’ve spent so much time trying to make one AI smarter. CAMEL reminds us that "Intelligence" is often a collective property. Two "Average" agents working together can often outperform one "Super" agent working alone. It’s a lesson from biology and sociology that we’re finally applying to silicon.

I think that’s a perfect place to leave it. A huge thanks to Daniel for the prompt—this was a deep one, and I feel like I actually understand why my terminal has been full of "Agent A is typing..." messages lately.

It’s been a blast. And remember, the CAMEL is a hardy animal—it can cross deserts that would kill other frameworks. It’s built for the long haul.

Nice. I was waiting for a camel pun. You didn't disappoint. Thanks as always to our producer, Hilbert Flumingtop, for keeping the gears turning behind the scenes. And a big thanks to Modal for providing the GPU credits that power this show—their serverless setup is exactly the kind of infrastructure that makes running these swarms possible without losing your mind.

This has been My Weird Prompts. If you’re enjoying these deep dives into the "Agentic Mesh," do us a favor and leave a review on whatever podcast app you’re using. It really helps the "Algorithm" find us, and we promise not to let the agents write the reviews themselves... mostly.

Speak for yourself, Herman. I’m already training a "Review-Writing Sloth" agent. Find us at my weird prompts dot com for all the links and the RSS feed. We'll see you in the next one.

See ya.