AI

Artificial intelligence, machine learning, and everything LLM

#2253: Why AI Agents Get Three Steps, Not Infinity

Why do AI agents get exactly three rounds of tool use? It's a critical guardrail against infinite loops and runaway costs, not a limit on intellige...

#2251: Agent-to-Agent Protocols: What Actually Needs Standardizing

When autonomous agents call other agents, what does a working protocol actually require? Exploring session handling, state management, security, an...

#2250: Where AI Safety Researchers Actually Work

Vendor labs, independent research orgs, government agencies—the AI safety field is messier and more diverse than most people realize. A map of wher...

#2249: Building Custom Benchmarks for Agentic Systems

Public benchmarks fail for agentic systems. Learn how to build evaluation frameworks that actually predict production behavior.

#2246: Constitutional AI: Anthropic's Theory of Safe Scaling

How Anthropic's Constitutional AI replaces human raters with AI self-critique guided by explicit principles—and what it assumes about the future of...

#2243: What Enterprise AI Pricing Actually Negotiates

Enterprise customers rarely get the deep discounts they expect from AI APIs. What they actually negotiate for—and why the ramp-up requirement exist...

#2242: AI as Your Ideation Blind Spot Spotter

How to use AI not to answer questions you already know to ask, but to surface possibilities your expertise has made invisible to you.

#2241: When More Frameworks Make Worse Decisions

Benjamin Franklin's 250-year-old pro/con list still dominates how we decide—but research shows it's riddled with bias. We map five frameworks that ...

#2239: How AI Benchmarks Became Broken (And What's Replacing Them)

The tests we use to measure AI progress are contaminated, saturated, and gamed. Here's what's actually working.

#2233: Who Actually Wants AI to Slow Down?

Daniel argues AI development should slow down for expertise and stability. But who in the industry actually shares this philosophy beyond the obvio...

#2228: Tuning RAG: When Retrieval Helps vs. Hurts

How do you prevent retrieval from suppressing a model's reasoning? We diagnose our own pipeline's four control levers and multi-source fusion strat...

#2224: Why AI Can't Crack the Voynich Manuscript

A fifteenth-century text has defeated cryptanalysts, linguists, and AI models alike. What does its resistance tell us about language, encoding, and...

#2221: What Podcasts Should You Actually Listen To?

Two AI hosts curate 12 podcasts for curious minds—and ask whether an AI can actually have taste in the first place.

#2219: Spec-Driven Life: How AI Planning Beats Project Paralysis

What makes AI agents reliably productive? A structured spec that externalizes memory and chunks work into manageable pieces. Can the same framework...

#2214: Real-Time News at War Speed: Building AI Pipelines for Breaking Conflict

When a conflict changes hourly, AI systems built for yesterday's information fail. Here's how to architect pipelines that actually keep up.

#2213: Grading the News: Benchmarking RAG Search Tools

How do you rigorously evaluate whether Tavily or Exa retrieves better results for breaking news? A formal benchmark beats the vibe check.

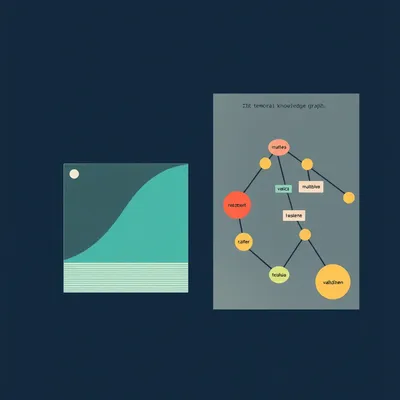

#2208: Building Memory for AI Characters That Actually Evolve

How do AI hosts develop real consistency across episodes? Corn and Herman explore retrieval-augmented memory systems that let AI characters genuine...

#2207: Specs First, Code Second: Inside Agentic AI's New Era

As AI coding agents evolve from autocomplete to autonomous cloud workers, the bottleneck has shifted—now it's about how clearly you specify what ne...

#2206: What Actually Works in AI Memory

Most AI memory systems are just vector databases with similarity search. We break down what mem0, Zep, and Letta are actually doing—and why benchma...

#2205: When AI Coding Agents Forget: Five Approaches to Context Rot

As coding agents handle longer sessions, they accumulate noise and lose crucial information. Five competing frameworks are solving this differently...